#include "DiffeGradientUtils.h"

Public Member Functions

| llvm::AllocaInst * | getDifferential (llvm::Value *val) |

| llvm::Value * | diffe (llvm::Value *val, llvm::IRBuilder<> &BuilderM) |

| llvm::SmallVector< llvm::SelectInst *, 4 > | addToDiffe (llvm::Value *val, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::Type *addingType, llvm::ArrayRef< llvm::Value * > idxs={}, llvm::Value *mask=nullptr) |

| Returns created select instructions, if any. | |

| llvm::SmallVector< llvm::SelectInst *, 4 > | addToDiffe (llvm::Value *val, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::Type *addingType, unsigned start, unsigned size, llvm::ArrayRef< llvm::Value * > idxs={}, llvm::Value *mask=nullptr, size_t ignoreFirstSlicesToDiff=0) |

| Returns created select instructions, if any. | |

| void | setDiffe (llvm::Value *val, llvm::Value *toset, llvm::IRBuilder<> &BuilderM) |

| llvm::CallInst * | freeCache (llvm::BasicBlock *forwardPreheader, const SubLimitType &sublimits, int i, llvm::AllocaInst *alloc, llvm::Type *myType, llvm::ConstantInt *byteSizeOfType, llvm::Value *storeInto, llvm::MDNode *InvariantMD) override |

| If an allocation is requested to be freed, this subclass will be called to choose how and where to free it. | |

| void | addToInvertedPtrDiffe (llvm::Instruction *orig, llvm::Value *origVal, llvm::Type *addingType, unsigned start, unsigned size, llvm::Value *origptr, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::MaybeAlign align=llvm::MaybeAlign(), llvm::Value *mask=nullptr) |

| align is the alignment to specify for the load/store through the pointer. | |

| void | addToInvertedPtrDiffe (llvm::Instruction *orig, llvm::Value *origVal, TypeTree vd, unsigned size, llvm::Value *origptr, llvm::Value *prediff, llvm::IRBuilder<> &Builder2, llvm::MaybeAlign align=llvm::MaybeAlign(), llvm::Value *premask=nullptr) |

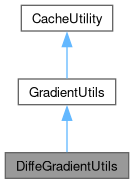

Public Member Functions inherited from GradientUtils

| llvm::SmallVector< llvm::OperandBundleDef, 2 > | getInvertedBundles (llvm::CallInst *orig, llvm::ArrayRef< ValueType > types, llvm::IRBuilder<> &Builder2, bool lookup, const llvm::ValueToValueMapTy &available=llvm::ValueToValueMapTy()) |

| bool | usedInRooting (const llvm::CallBase *orig, llvm::ArrayRef< ValueType > types, const llvm::Value *val, bool shadow) const |

| llvm::Value * | getNewIfOriginal (llvm::Value *originst) const |

| llvm::Value * | ompThreadId () |

| llvm::Value * | ompNumThreads () |

| llvm::Value * | getOrInsertTotalMultiplicativeProduct (llvm::Value *val, LoopContext &lc) |

| llvm::Value * | getOrInsertConditionalIndex (llvm::Value *val, LoopContext &lc, bool pickTrue) |

| bool | assumeDynamicLoopOfSizeOne (llvm::Loop *L) const override |

| llvm::DebugLoc | getNewFromOriginal (const llvm::DebugLoc L) const |

| llvm::Value * | getNewFromOriginal (const llvm::Value *originst) const |

| llvm::Instruction * | getNewFromOriginal (const llvm::Instruction *newinst) const |

| llvm::BasicBlock * | getNewFromOriginal (const llvm::BasicBlock *newinst) const |

| llvm::Value * | hasUninverted (const llvm::Value *inverted) const |

| llvm::BasicBlock * | getOriginalFromNew (const llvm::BasicBlock *newinst) const |

| llvm::Value * | isOriginal (const llvm::Value *newinst) const |

| llvm::Instruction * | isOriginal (const llvm::Instruction *newinst) const |

| llvm::BasicBlock * | isOriginal (const llvm::BasicBlock *newinst) const |

| void | computeForwardingProperties (llvm::Instruction *V) |

| void | computeGuaranteedFrees () |

| void | replaceAndRemoveUnwrapCacheFor (llvm::Value *A, llvm::Value *B) |

| llvm::BasicBlock * | addReverseBlock (llvm::BasicBlock *currentBlock, llvm::Twine const &name, bool forkCache=true, bool push=true) |

| bool | legalRecompute (const llvm::Value *val, const llvm::ValueToValueMapTy &available, llvm::IRBuilder<> *BuilderM, bool reverse=false, bool legalRecomputeCache=true) const |

| bool | shouldRecompute (const llvm::Value *val, const llvm::ValueToValueMapTy &available, llvm::IRBuilder<> *BuilderM) |

| Given the option to recompute a value or re-use an old one, return true if it is faster to recompute this value from scratch. | |

| void | replaceAWithB (llvm::Value *A, llvm::Value *B, bool storeInCache=false) override |

| Replace this instruction both in LLVM modules and any local data-structures. | |

| void | erase (llvm::Instruction *I) override |

| Erase this instruction both from LLVM modules and any local data-structures. | |

| void | eraseWithPlaceholder (llvm::Instruction *I, llvm::Instruction *orig, const llvm::Twine &suffix="_replacementA", bool erase=true) |

| void | setTape (llvm::Value *newtape) |

| void | dumpPointers () |

| int | getIndex (std::pair< llvm::Instruction *, CacheType > idx, const std::map< std::pair< llvm::Instruction *, CacheType >, int > &mapping, llvm::IRBuilder<> &) |

| int | getIndex (std::pair< llvm::Instruction *, CacheType > idx, std::map< std::pair< llvm::Instruction *, CacheType >, int > &mapping, llvm::IRBuilder<> &) |

| llvm::Value * | cacheForReverse (llvm::IRBuilder<> &BuilderQ, llvm::Value *malloc, int idx, bool replace=true) |

| llvm::ArrayRef< llvm::WeakTrackingVH > | getTapeValues () const |

| unsigned | getWidth () |

| GradientUtils (EnzymeLogic &Logic, llvm::Function *newFunc_, llvm::Function *oldFunc_, llvm::TargetLibraryInfo &TLI_, TypeAnalysis &TA_, TypeResults TR_, llvm::ValueToValueMapTy &invertedPointers_, const llvm::SmallPtrSetImpl< llvm::Value * > &constantvalues_, const llvm::SmallPtrSetImpl< llvm::Value * > &activevals_, DIFFE_TYPE ReturnActivity, bool shadowReturnUsed, llvm::ArrayRef< DIFFE_TYPE > ArgDiffeTypes_, llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > &originalToNewFn_, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool omp) | |

| DIFFE_TYPE | getDiffeType (llvm::Value *v, bool foreignFunction) const |

| DIFFE_TYPE | getReturnDiffeType (llvm::Value *orig, bool *primalReturnUsedP, bool *shadowReturnUsedP, DerivativeMode cmode) const |

| DIFFE_TYPE | getReturnDiffeType (llvm::Value *orig, bool *primalReturnUsedP, bool *shadowReturnUsedP) const |

| llvm::MDNode * | getDerivativeAliasScope (const llvm::Value *origptr, ssize_t newptr) |

| void | setPtrDiffe (llvm::Instruction *orig, llvm::Value *ptr, llvm::Value *newval, llvm::IRBuilder<> &BuilderM, llvm::MaybeAlign align, unsigned start, unsigned size, bool isVolatile, llvm::AtomicOrdering ordering, llvm::SyncScope::ID syncScope, llvm::Value *mask, llvm::ArrayRef< llvm::Metadata * > noAlias, llvm::ArrayRef< llvm::Metadata * > scopes, bool needs_post_cache=false) |

| llvm::BasicBlock * | getReverseOrLatchMerge (llvm::BasicBlock *BB, llvm::BasicBlock *branchingBlock) |

| Given an edge from BB to branchingBlock get the corresponding block to branch to in the reverse pass. | |

| void | forceContexts () |

| void | computeMinCache () |

| bool | isOriginalBlock (const llvm::BasicBlock &BB) const |

| void | eraseFictiousPHIs () |

| void | forceActiveDetection () |

| bool | isConstantValue (llvm::Value *val) const |

| bool | isConstantInstruction (const llvm::Instruction *inst) const |

| bool | getContext (llvm::BasicBlock *BB, LoopContext &lc) |

| void | forceAugmentedReturns () |

| llvm::Value * | unwrapM (llvm::Value *const val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &available, UnwrapMode unwrapMode, llvm::BasicBlock *scope=nullptr, bool permitCache=true) override final |

| If performing a full unwrap, unwrap not only this instruction but also its operands, and so on. | |

| void | ensureLookupCached (llvm::Instruction *inst, bool shouldFree=true, llvm::BasicBlock *scope=nullptr, llvm::MDNode *TBAA=nullptr) |

| llvm::Value * | fixLCSSA (llvm::Instruction *inst, llvm::BasicBlock *forwardBlock, bool legalInBlock=false) |

| llvm::Value * | lookupM (llvm::Value *val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &incoming_availalble=llvm::ValueToValueMapTy(), bool tryLegalRecomputeCheck=true, llvm::BasicBlock *scope=nullptr) override |

| High-level utility to get the value of an instruction at a new location specified by BuilderM. | |

| llvm::Value * | invertPointerM (llvm::Value *val, llvm::IRBuilder<> &BuilderM, bool nullShadow=false) |

| void | branchToCorrespondingTarget (llvm::BasicBlock *ctx, llvm::IRBuilder<> &BuilderM, const std::map< llvm::BasicBlock *, std::vector< std::pair< llvm::BasicBlock *, llvm::BasicBlock * > > > &targetToPreds, const std::map< llvm::BasicBlock *, llvm::PHINode * > *replacePHIs=nullptr) |

| Given a map of edges we could have taken to desired target, compute a value that determines which target should be branched to. | |

| void | getReverseBuilder (llvm::IRBuilder<> &Builder2, bool original=true) |

| void | getForwardBuilder (llvm::IRBuilder<> &Builder2) |

| llvm::Type * | getShadowType (llvm::Type *ty) |

| template<typename Func , typename... Args> | |

| llvm::Value * | applyChainRule (llvm::Type *diffType, llvm::IRBuilder<> &Builder, Func rule, Args... args) |

| Unwraps a vector derivative from its internal representation and applies a function f to each element. | |

| template<typename Func , typename... Args> | |

| void | applyChainRule (llvm::IRBuilder<> &Builder, Func rule, Args... args) |

| Unwraps a vector derivative from its internal representation and applies a function f to each element. | |

| template<typename Func > | |

| llvm::Value * | applyChainRule (llvm::Type *diffType, llvm::ArrayRef< llvm::Constant * > diffs, llvm::IRBuilder<> &Builder, Func rule) |

| Unwraps a collection of constant vector derivatives from their internal representations and applies a function f to each element. | |

| bool | needsCacheWholeAllocation (const llvm::Value *V) const |
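The applyChainRule overloads above operate on LLVM IR values. As a standalone illustration of the idea only, the sketch below models a batched derivative of width width as a std::vector<double> with one lane per derivative direction and applies a rule lane by lane. The name applyChainRuleSketch and the vector representation are assumptions made for this example, not Enzyme's API.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a batched ("vector") derivative: one lane per
// derivative direction. This models the idea only; it is not Enzyme's API.
using Shadow = std::vector<double>;

// Apply `rule` lane by lane to one or more shadows and collect the per-lane
// results into a new shadow. Every shadow must have at least `width` lanes.
template <typename Func, typename... Args>
Shadow applyChainRuleSketch(std::size_t width, Func rule, const Args &...args) {
  Shadow out(width);
  for (std::size_t lane = 0; lane < width; ++lane)
    out[lane] = rule(args[lane]...); // e.g. rule(a[lane], b[lane])
  return out;
}
```

For example, propagating tangents through y = x * x at x = 3 for two directions multiplies each lane of dx by 2x, so dx = {1, 0.5} becomes dy = {6, 3}.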

Public Member Functions inherited from CacheUtility

| virtual | ~CacheUtility () |

| bool | getContext (llvm::BasicBlock *BB, LoopContext &loopContext, bool ReverseLimit) |

| Given a BasicBlock BB in newFunc, set loopContext to the relevant contained loop and return true. | |

| bool | isInstructionUsedInLoopInduction (llvm::Instruction &I) |

| Return whether the given instruction is required as part of a loop context. This includes its use as the canonical induction variable or as its increment. | |

| llvm::AllocaInst * | getDynamicLoopLimit (llvm::Loop *L, bool ReverseLimit=true) |

| void | dumpScope () |

| Print out all currently cached values. | |

| unsigned | getCacheAlignment (unsigned bsize) const |

| SubLimitType | getSubLimits (bool inForwardPass, llvm::IRBuilder<> *RB, LimitContext ctx, llvm::Value *extraSize=nullptr) |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

| llvm::AllocaInst * | createCacheForScope (LimitContext ctx, llvm::Type *T, llvm::StringRef name, bool shouldFree, bool allocateInternal=true, llvm::Value *extraSize=nullptr) |

| Create a cache of Type T at the given LimitContext. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::IRBuilder<> &BuilderM, llvm::Value *val, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the value val in the cache at the location defined in the given builder. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::Instruction *inst, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the instruction in the cache right after the instruction is executed. | |

| llvm::Value * | getCachePointer (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool storeInInstructionsMap, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize) |

| Given an allocation specified by the LimitContext ctx and cache, compute a pointer that can hold the underlying type being cached. | |

| llvm::Value * | lookupValueFromCache (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool isi1, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize=nullptr, llvm::Value *extraOffset=nullptr) |

| Given an allocation specified by the LimitContext ctx and cache, look up the underlying cached value. | |
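To make the store/lookup pairing concrete, here is a hypothetical and heavily simplified model of a cache for a two-deep loop nest whose trip counts are known at the outer preheader (as we understand it, the case in which getSubLimits would need only a single chunk). Values are written under a row-major index during the forward pass and read back by iteration during the reverse pass. LoopNestCache is an invented name; the real CacheUtility emits IR allocations, stores, and loads instead.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical model of a per-iteration cache for a two-deep loop nest whose
// trip counts are known at the outer preheader. Not Enzyme's CacheUtility.
class LoopNestCache {
public:
  LoopNestCache(std::size_t outer, std::size_t inner)
      : inner_(inner), data_(outer * inner) {}

  // Forward pass: record the value produced in iteration (i, j).
  void store(std::size_t i, std::size_t j, double v) { data_[index(i, j)] = v; }

  // Reverse pass: fetch the value recorded in iteration (i, j).
  double lookup(std::size_t i, std::size_t j) const { return data_[index(i, j)]; }

private:
  // Row-major linearization of the (i, j) iteration space.
  std::size_t index(std::size_t i, std::size_t j) const { return i * inner_ + j; }

  std::size_t inner_;
  std::vector<double> data_;
};
```

A reverse pass would walk i and j from their last iteration down to zero and call lookup with the same (i, j) the forward pass used for store.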

Static Public Member Functions

| static DiffeGradientUtils * | CreateFromClone (EnzymeLogic &Logic, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, llvm::Function *todiff, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, FnTypeInfo &oldTypeInfo, DIFFE_TYPE retType, bool shadowReturnArg, bool diffeReturnArg, llvm::ArrayRef< DIFFE_TYPE > constant_args, bool returnTape, bool returnPrimal, llvm::Type *additionalArg, bool omp) |

Static Public Member Functions inherited from GradientUtils

| static GradientUtils * | CreateFromClone (EnzymeLogic &Logic, bool runtimeActivity, bool strongZero, unsigned width, llvm::Function *todiff, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, FnTypeInfo &oldTypeInfo, DIFFE_TYPE retType, llvm::ArrayRef< DIFFE_TYPE > constant_args, bool returnUsed, bool shadowReturnUsed, std::map< AugmentedStruct, int > &returnMapping, bool omp) |

| static llvm::Constant * | GetOrCreateShadowConstant (RequestContext context, EnzymeLogic &Logic, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, llvm::Constant *F, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool AtomicAdd) |

| static llvm::Constant * | GetOrCreateShadowFunction (RequestContext context, EnzymeLogic &Logic, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, llvm::Function *F, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool AtomicAdd) |

| static llvm::Type * | getShadowType (llvm::Type *ty, unsigned width) |

| static llvm::Value * | extractMeta (llvm::IRBuilder<> &Builder, llvm::Value *Agg, unsigned off, const llvm::Twine &name="") |

| Helper routine to extract a nested element from a struct/array. This is … | |

| static llvm::Value * | extractMeta (llvm::IRBuilder<> &Builder, llvm::Value *Agg, llvm::ArrayRef< unsigned > off, const llvm::Twine &name="", bool fallback=true) |

| Helper routine to extract a nested element from a struct/array. Unlike the … | |

| static llvm::Type * | extractMeta (llvm::Type *T, llvm::ArrayRef< unsigned > off) |

| Helper routine to get the type of an extraction. | |

| static llvm::Value * | recursiveFAdd (llvm::IRBuilder<> &B, llvm::Value *lhs, llvm::Value *rhs, llvm::ArrayRef< unsigned > lhs_off={}, llvm::ArrayRef< unsigned > rhs_off={}, llvm::Value *prev=nullptr, bool vectorLayer=false) |
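recursiveFAdd and extractMeta work on nested LLVM aggregate values. The sketch below mirrors the same recursion over a hypothetical Agg tree type in which leaves are scalars and inner nodes stand for struct or array elements. The names Agg, scalar, group, recursiveAdd, and extractPath are invented for illustration, and the sketch assumes both operands of the addition have identical shape, which the assertion checks.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Hypothetical stand-in for an aggregate (struct/array) derivative: either a
// scalar leaf or a list of nested elements. Not an LLVM or Enzyme type.
struct Agg {
  double value = 0.0;
  std::vector<Agg> elems; // empty => scalar leaf
};

Agg scalar(double v) { Agg a; a.value = v; return a; }
Agg group(std::vector<Agg> e) { Agg a; a.elems = std::move(e); return a; }

// Elementwise sum of two same-shaped aggregates, recursing into nested
// elements, in the spirit of recursiveFAdd.
Agg recursiveAdd(const Agg &lhs, const Agg &rhs) {
  if (lhs.elems.empty())
    return scalar(lhs.value + rhs.value);
  assert(lhs.elems.size() == rhs.elems.size() && "shape mismatch");
  Agg out;
  for (std::size_t k = 0; k < lhs.elems.size(); ++k)
    out.elems.push_back(recursiveAdd(lhs.elems[k], rhs.elems[k]));
  return out;
}

// Follow a path of indices into a nested aggregate, similar in spirit to
// extractMeta with an index list.
const Agg &extractPath(const Agg &agg, const std::vector<std::size_t> &path) {
  const Agg *cur = &agg;
  for (std::size_t idx : path)
    cur = &cur->elems.at(idx);
  return *cur;
}
```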

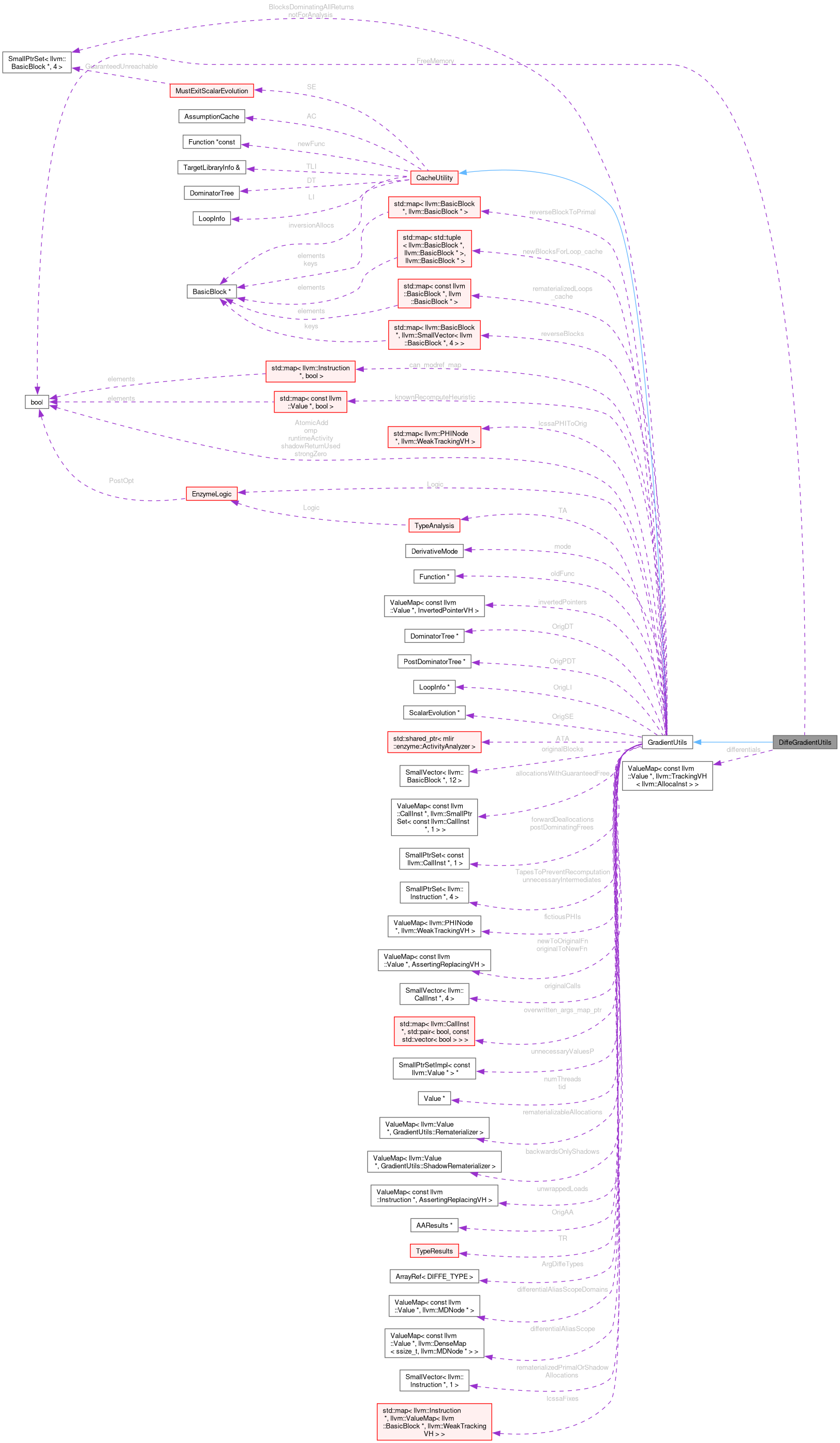

Public Attributes

| bool | FreeMemory |

| Whether to free memory in reverse pass or split forward. | |

| llvm::ValueMap< const llvm::Value *, llvm::TrackingVH< llvm::AllocaInst > > | differentials |

Public Attributes inherited from GradientUtils

| EnzymeLogic & | Logic |

| bool | AtomicAdd |

| DerivativeMode | mode |

| llvm::Function * | oldFunc |

| llvm::ValueMap< const llvm::Value *, InvertedPointerVH > | invertedPointers |

| llvm::DominatorTree * | OrigDT |

| llvm::PostDominatorTree * | OrigPDT |

| llvm::LoopInfo * | OrigLI |

| llvm::ScalarEvolution * | OrigSE |

| llvm::SmallPtrSet< llvm::BasicBlock *, 4 > | BlocksDominatingAllReturns |

| (Original) Blocks which dominate all returns | |

| llvm::SmallPtrSet< llvm::BasicBlock *, 4 > | notForAnalysis |

| std::shared_ptr< ActivityAnalyzer > | ATA |

| llvm::SmallVector< llvm::BasicBlock *, 12 > | originalBlocks |

| llvm::ValueMap< const llvm::CallInst *, llvm::SmallPtrSet< const llvm::CallInst *, 1 > > | allocationsWithGuaranteedFree |

| Map from allocations that are known to always be freed before the reverse pass to the list of frees that must apply to each allocation. | |

| llvm::SmallPtrSet< const llvm::CallInst *, 1 > | postDominatingFrees |

| Frees that can always be eliminated because they post-dominate an allocation (which will itself be freed). | |

| llvm::SmallPtrSet< const llvm::CallInst *, 1 > | forwardDeallocations |

| Deallocations that should be kept in the forward pass because they deallocate memory that isn't needed in the reverse pass. | |

| std::map< llvm::BasicBlock *, llvm::SmallVector< llvm::BasicBlock *, 4 > > | reverseBlocks |

| Map of primal block to corresponding block(s) in reverse. | |

| std::map< llvm::BasicBlock *, llvm::BasicBlock * > | reverseBlockToPrimal |

| Map of block in reverse to corresponding primal block. | |

| llvm::SmallPtrSet< llvm::Instruction *, 4 > | TapesToPreventRecomputation |

| A set of tape extractions whose cached values must be used rather than attempting to recompute them. | |

| llvm::ValueMap< llvm::PHINode *, llvm::WeakTrackingVH > | fictiousPHIs |

| llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > | originalToNewFn |

| llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > | newToOriginalFn |

| llvm::SmallVector< llvm::CallInst *, 4 > | originalCalls |

| llvm::SmallPtrSet< llvm::Instruction *, 4 > | unnecessaryIntermediates |

| const std::map< llvm::Instruction *, bool > * | can_modref_map |

| const std::map< llvm::CallInst *, std::pair< bool, const std::vector< bool > > > * | overwritten_args_map_ptr |

| const llvm::SmallPtrSetImpl< const llvm::Value * > * | unnecessaryValuesP |

| llvm::Value * | tid |

| llvm::Value * | numThreads |

| llvm::ValueMap< llvm::Value *, Rematerializer > | rematerializableAllocations |

| llvm::ValueMap< llvm::Value *, ShadowRematerializer > | backwardsOnlyShadows |

| Shadows that are only loaded from and stored to (not captured), mapped to their stores (and memsets). | |

| std::map< const llvm::Value *, bool > | knownRecomputeHeuristic |

| llvm::ValueMap< const llvm::Instruction *, AssertingReplacingVH > | unwrappedLoads |

| llvm::AAResults * | OrigAA |

| TypeAnalysis & | TA |

| TypeResults | TR |

| bool | omp |

| bool | runtimeActivity |

| bool | strongZero |

| bool | shadowReturnUsed |

| llvm::ArrayRef< DIFFE_TYPE > | ArgDiffeTypes |

| llvm::ValueMap< const llvm::Value *, llvm::MDNode * > | differentialAliasScopeDomains |

| llvm::ValueMap< const llvm::Value *, llvm::DenseMap< ssize_t, llvm::MDNode * > > | differentialAliasScope |

| std::map< std::tuple< llvm::BasicBlock *, llvm::BasicBlock * >, llvm::BasicBlock * > | newBlocksForLoop_cache |

| This cache stores blocks that may be inserted as part of getReverseOrLatchMerge to handle inverted induction-variable iteration. | |

| std::map< const llvm::BasicBlock *, llvm::BasicBlock * > | rematerializedLoops_cache |

| This cache stores a rematerialized forward pass in the loop specified. | |

| llvm::SmallVector< llvm::Instruction *, 1 > | rematerializedPrimalOrShadowAllocations |

| std::map< llvm::Instruction *, llvm::ValueMap< llvm::BasicBlock *, llvm::WeakTrackingVH > > | lcssaFixes |

| std::map< llvm::PHINode *, llvm::WeakTrackingVH > | lcssaPHIToOrig |

Public Attributes inherited from CacheUtility

| llvm::Function *const | newFunc |

| The function whose instructions we are caching. | |

| llvm::TargetLibraryInfo & | TLI |

| Various analysis results of newFunc. | |

| llvm::DominatorTree | DT |

| llvm::BasicBlock * | inversionAllocs |

Additional Inherited Members

Public Types inherited from CacheUtility

| typedef llvm::SmallVector< std::pair< llvm::Value *, llvm::SmallVector< std::pair< LoopContext, llvm::Value * >, 4 > >, 0 > | SubLimitType |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

Protected Member Functions inherited from CacheUtility

| CacheUtility (llvm::TargetLibraryInfo &TLI, llvm::Function *newFunc) | |

| llvm::Value * | loadFromCachePointer (llvm::Type *T, llvm::IRBuilder<> &BuilderM, llvm::Value *cptr, llvm::Value *cache) |

| Perform the final load from the cache, applying requisite invariant group and alignment. | |

Protected Attributes inherited from CacheUtility

| llvm::LoopInfo | LI |

| llvm::AssumptionCache | AC |

| MustExitScalarEvolution | SE |

| std::map< llvm::Loop *, LoopContext > | loopContexts |

| Map of Loop to requisite loop information needed for AD (forward/reverse induction/etc) | |

| std::map< llvm::Value *, std::pair< llvm::AssertingVH< llvm::AllocaInst >, LimitContext > > | scopeMap |

| A map of values being cached to their underlying allocation/limit context. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::Instruction >, 4 > > | scopeInstructions |

| A map from allocations to the instructions used to create them. Keeping track of these values is useful for deallocation. | |

| std::map< llvm::AllocaInst *, std::set< llvm::AssertingVH< llvm::CallInst > > > | scopeFrees |

| A map of allocations to a set of instructions which free memory as part of the cache. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::CallInst >, 4 > > | scopeAllocs |

| A map of allocations to a set of instructions which allocate memory as part of the cache. | |

| llvm::SmallPtrSet< llvm::LoadInst *, 10 > | CacheLookups |

Detailed Description

Definition at line 62 of file DiffeGradientUtils.h.

Member Function Documentation

◆ addToDiffe() [1/2]

llvm::SmallVector<llvm::SelectInst *, 4> DiffeGradientUtils::addToDiffe(llvm::Value *val, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::Type *addingType, llvm::ArrayRef<llvm::Value *> idxs = {}, llvm::Value *mask = nullptr)

Returns created select instructions, if any.

Referenced by EnzymeGradientUtilsAddToDiffe().
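In reverse mode, adding to a differential is a read-add-write on the adjoint of val. The sketch below assumes, based on the returned list of SelectInst, that a mask keeps masked-off lanes at their previous adjoint, as a per-lane select(mask, old + dif, old) would. It uses a hypothetical string-keyed map in place of LLVM values and lazily zero-initializes an adjoint on first use; addToDiffeSketch is an invented name.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <unordered_map>
#include <vector>

// Hypothetical model: each primal value (named by a string) owns an adjoint
// with one slot per vector lane. Not Enzyme's actual data structure.
using Lanes = std::vector<double>;

// Accumulate `dif` into the adjoint of `val`. When a mask is supplied, lanes
// whose mask bit is false keep their previous adjoint, which is what a
// per-lane select(mask, old + dif, old) would produce. `dif` must have the
// same number of lanes as any existing adjoint for `val`.
void addToDiffeSketch(std::unordered_map<std::string, Lanes> &adjoints,
                      const std::string &val, const Lanes &dif,
                      const std::vector<bool> *mask = nullptr) {
  Lanes &adj = adjoints[val];
  if (adj.empty())
    adj.assign(dif.size(), 0.0); // lazily zero-initialize on first use
  for (std::size_t lane = 0; lane < dif.size(); ++lane) {
    double updated = adj[lane] + dif[lane];
    adj[lane] = (mask == nullptr || (*mask)[lane]) ? updated : adj[lane];
  }
}
```

Because contributions are added rather than stored, a value used several times in the primal receives the sum of all its incoming adjoints.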

◆ addToDiffe() [2/2]

llvm::SmallVector<llvm::SelectInst *, 4> DiffeGradientUtils::addToDiffe(llvm::Value *val, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::Type *addingType, unsigned start, unsigned size, llvm::ArrayRef<llvm::Value *> idxs = {}, llvm::Value *mask = nullptr, size_t ignoreFirstSlicesToDiff = 0)

Returns created select instructions, if any.
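This overload additionally restricts the update to a region described by start and size. Their units are not stated on this page, so the minimal sketch below simply assumes they count elements of a flat adjoint buffer; addToDiffeSlice is a hypothetical name and the real overload works on LLVM values rather than std::vector.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical sketch: add `dif` into elements [start, start + size) of a flat
// adjoint buffer, leaving every other element untouched. Treating start and
// size as element counts is an assumption made for illustration only.
void addToDiffeSlice(std::vector<double> &adjoint, const std::vector<double> &dif,
                     std::size_t start, std::size_t size) {
  assert(dif.size() == size && start + size <= adjoint.size());
  for (std::size_t k = 0; k < size; ++k)
    adjoint[start + k] += dif[k];
}
```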

◆ addToInvertedPtrDiffe() [1/2]

void DiffeGradientUtils::addToInvertedPtrDiffe(llvm::Instruction *orig, llvm::Value *origVal, llvm::Type *addingType, unsigned start, unsigned size, llvm::Value *origptr, llvm::Value *dif, llvm::IRBuilder<> &BuilderM, llvm::MaybeAlign align = llvm::MaybeAlign(), llvm::Value *mask = nullptr)

align is the alignment to specify for the load/store through the pointer.

Referenced by EnzymeGradientUtilsAddToInvertedPointerDiffe(), and EnzymeGradientUtilsAddToInvertedPointerDiffeTT().
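addToInvertedPtrDiffe accumulates into the shadow memory behind a pointer rather than into a register-like adjoint. When several threads may update the same shadow location, a plain load/add/store can lose updates; we assume, without confirmation from this page, that the GradientUtils::AtomicAdd attribute selects an atomic update for that case. The sketch contrasts both strategies on a std::atomic<double>, using a compare-and-swap loop because fetch_add for floating-point atomics is only available from C++20. accumulateIntoShadow is a hypothetical name.

```cpp
#include <atomic>
#include <cassert>

// Hypothetical sketch of "shadow[ptr] += dif" performed in the reverse pass.
// useAtomic models the idea behind the AtomicAdd flag (an assumption).
void accumulateIntoShadow(std::atomic<double> &shadow, double dif, bool useAtomic) {
  if (!useAtomic) {
    // Plain load/add/store: only correct if no other thread updates `shadow`.
    shadow.store(shadow.load(std::memory_order_relaxed) + dif,
                 std::memory_order_relaxed);
    return;
  }
  double old = shadow.load(std::memory_order_relaxed);
  // On failure, compare_exchange_weak reloads `old` with the current value,
  // so the sum is recomputed from fresh data before retrying.
  while (!shadow.compare_exchange_weak(old, old + dif, std::memory_order_relaxed)) {
  }
}
```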

◆ addToInvertedPtrDiffe() [2/2]

void DiffeGradientUtils::addToInvertedPtrDiffe(llvm::Instruction *orig, llvm::Value *origVal, TypeTree vd, unsigned size, llvm::Value *origptr, llvm::Value *prediff, llvm::IRBuilder<> &Builder2, llvm::MaybeAlign align = llvm::MaybeAlign(), llvm::Value *premask = nullptr)
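This overload receives type information (the TypeTree vd) instead of a single addingType. One plausible reading, assumed here rather than taken from this page, is that only byte ranges known to hold floating-point data receive derivative contributions, while integer and pointer bytes are left alone. The sketch uses a hypothetical per-byte ByteKind annotation and accumulates only 8-byte doubles; ByteKind and addFloatSlices are invented names, not Enzyme's TypeTree API.

```cpp
#include <cassert>
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical per-byte type annotation, loosely modeling what a TypeTree
// records about a memory region. Not Enzyme's TypeTree API.
enum class ByteKind { Float64, Integer, Pointer };

// Add the derivative bytes in `dif` into `shadow` only where the annotation
// says an 8-byte double starts; integer and pointer bytes carry no derivative.
void addFloatSlices(unsigned char *shadow, const unsigned char *dif,
                    const std::vector<ByteKind> &kinds) {
  for (std::size_t off = 0; off + sizeof(double) <= kinds.size();) {
    if (kinds[off] == ByteKind::Float64) {
      double s, d;
      std::memcpy(&s, shadow + off, sizeof s); // memcpy avoids aliasing issues
      std::memcpy(&d, dif + off, sizeof d);
      s += d;
      std::memcpy(shadow + off, &s, sizeof s);
      off += sizeof(double);
    } else {
      ++off; // skip non-float bytes one at a time
    }
  }
}
```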

◆ CreateFromClone()

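DiffeGradientUtils *DiffeGradientUtils::CreateFromClone(EnzymeLogic &Logic, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, llvm::Function *todiff, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, FnTypeInfo &oldTypeInfo, DIFFE_TYPE retType, bool shadowReturnArg, bool diffeReturnArg, llvm::ArrayRef<DIFFE_TYPE> constant_args, bool returnTape, bool returnPrimal, llvm::Type *additionalArg, bool omp)

[static]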

Definition at line 107 of file DiffeGradientUtils.cpp.

References TypeAnalysis::analyzeFunction(), FnTypeInfo::Arguments, PreProcessCache::CloneFunctionWithReturns(), ForwardMode, ForwardModeError, ForwardModeSplit, TypeResults::getFunction(), GradientUtils::invertedPointers, FnTypeInfo::KnownValues, GradientUtils::Logic, GradientUtils::mode, CacheUtility::newFunc, GradientUtils::oldFunc, GradientUtils::omp, EnzymeLogic::PPC, FnTypeInfo::Return, ReverseModeCombined, ReverseModeGradient, ReverseModePrimal, GradientUtils::runtimeActivity, GradientUtils::strongZero, GradientUtils::TA, CacheUtility::TLI, and GradientUtils::TR.

◆ diffe()

Value *DiffeGradientUtils::diffe(llvm::Value *val, llvm::IRBuilder<> &BuilderM)

Definition at line 225 of file DiffeGradientUtils.cpp.

References ForwardMode, ForwardModeError, ForwardModeSplit, getDifferential(), GradientUtils::getShadowType(), GradientUtils::invertPointerM(), GradientUtils::isConstantValue(), GradientUtils::mode, CacheUtility::newFunc, and GradientUtils::oldFunc.

Referenced by EnzymeGradientUtilsDiffe().

◆ freeCache()

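llvm::CallInst *DiffeGradientUtils::freeCache(llvm::BasicBlock *forwardPreheader, const SubLimitType &sublimits, int i, llvm::AllocaInst *alloc, llvm::Type *myType, llvm::ConstantInt *byteSizeOfType, llvm::Value *storeInto, llvm::MDNode *InvariantMD)

[override, virtual]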

If an allocation is requested to be freed, this subclass will be called to choose how and where to free it.

It is not implemented by default and falls back to an error. Subclasses that want to free memory should implement this function.

Reimplemented from CacheUtility.

Definition at line 812 of file DiffeGradientUtils.cpp.

References AttemptFullUnwrapWithLookup, CreateDealloc(), FreeMemory, CacheUtility::getCacheAlignment(), getFast(), CacheUtility::newFunc, GradientUtils::reverseBlocks, CacheUtility::scopeFrees, and GradientUtils::unwrapM().
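freeCache decides how and where a cache allocation is released. The hypothetical CacheTape below illustrates one such policy: buffers allocated during the forward pass are freed in the reverse pass, in LIFO order, once they are no longer needed, and only when a flag analogous to FreeMemory is set. It sketches the policy only, not the IR that freeCache actually emits; CacheTape and its members are invented names.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Hypothetical tape of cache buffers. Buffers are allocated while the forward
// pass runs and, if freeMemory is set (modeling FreeMemory), released in the
// reverse pass after their last use, in LIFO order. Not Enzyme's mechanism.
class CacheTape {
public:
  explicit CacheTape(bool freeMemory) : freeMemory_(freeMemory) {}
  CacheTape(const CacheTape &) = delete;
  CacheTape &operator=(const CacheTape &) = delete;
  // In this sketch, anything still live is released on destruction so the
  // example does not leak.
  ~CacheTape() { for (void *p : live_) std::free(p); }

  double *allocate(std::size_t n) {
    auto *p = static_cast<double *>(std::malloc(n * sizeof(double)));
    live_.push_back(p);
    return p;
  }

  // Called once the reverse pass no longer needs the most recent cache.
  void releaseLast() {
    if (!freeMemory_ || live_.empty())
      return; // policy says someone else frees it
    std::free(live_.back());
    live_.pop_back();
    ++freed_;
  }

  std::size_t freed() const { return freed_; }
  std::size_t live() const { return live_.size(); }

private:
  bool freeMemory_;
  std::vector<void *> live_;
  std::size_t freed_ = 0;
};
```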

◆ getDifferential()

AllocaInst *DiffeGradientUtils::getDifferential(llvm::Value *val)

Definition at line 192 of file DiffeGradientUtils.cpp.

References differentials, ForwardMode, ForwardModeError, ForwardModeSplit, getFast(), GradientUtils::getShadowType(), CacheUtility::inversionAllocs, GradientUtils::mode, GradientUtils::oldFunc, and ZeroMemory().

Referenced by diffe(), and setDiffe().
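Judging from its references (differentials, ZeroMemory(), CacheUtility::inversionAllocs), getDifferential appears to create a zero-initialized stack slot for a value's adjoint on first request and to return the cached slot afterwards; in the reverse modes, diffe and setDiffe then read and overwrite that slot. The sketch below models this behavior with hypothetical names (DifferentialSlots and its methods), using one heap-allocated double per value so slot addresses stay stable when the map rehashes, much as an alloca's address stays fixed.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <unordered_map>
#include <utility>

// Hypothetical model of the `differentials` map. The first request for a
// value's adjoint creates a zero-initialized slot; later requests return the
// same slot. Not Enzyme's implementation.
class DifferentialSlots {
public:
  double *getDifferential(const std::string &val) {
    auto it = slots_.find(val);
    if (it != slots_.end())
      return it->second.get();
    auto slot = std::make_unique<double>(0.0); // zero-initialize, like ZeroMemory
    double *raw = slot.get();
    slots_.emplace(val, std::move(slot));
    return raw;
  }

  // Like diffe() in reverse mode: read the current accumulated adjoint.
  double diffe(const std::string &val) { return *getDifferential(val); }

  // Like setDiffe(): overwrite, rather than accumulate into, the adjoint.
  void setDiffe(const std::string &val, double v) { *getDifferential(val) = v; }

private:
  std::unordered_map<std::string, std::unique_ptr<double>> slots_;
};
```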

◆ setDiffe()

void DiffeGradientUtils::setDiffe(llvm::Value *val, llvm::Value *toset, llvm::IRBuilder<> &BuilderM)

Definition at line 769 of file DiffeGradientUtils.cpp.

References GradientUtils::erase(), ForwardMode, ForwardModeError, ForwardModeSplit, getDifferential(), GradientUtils::getShadowType(), GradientUtils::invertedPointers, GradientUtils::isConstantValue(), GradientUtils::mode, CacheUtility::newFunc, GradientUtils::oldFunc, GradientUtils::replaceAWithB(), and SanitizeDerivatives().

Referenced by EnzymeGradientUtilsSetDiffe().

Member Data Documentation

◆ differentials

llvm::ValueMap<const llvm::Value *, llvm::TrackingVH<llvm::AllocaInst>> DiffeGradientUtils::differentials

Definition at line 79 of file DiffeGradientUtils.h.

Referenced by getDifferential().

◆ FreeMemory

bool DiffeGradientUtils::FreeMemory

Whether to free memory in reverse pass or split forward.

Definition at line 77 of file DiffeGradientUtils.h.

Referenced by freeCache().

The documentation for this class was generated from the following files:

Generated on Fri May 8 2026 19:56:26 for Enzyme by