#include "MLIR/Interfaces/GradientUtils.h"

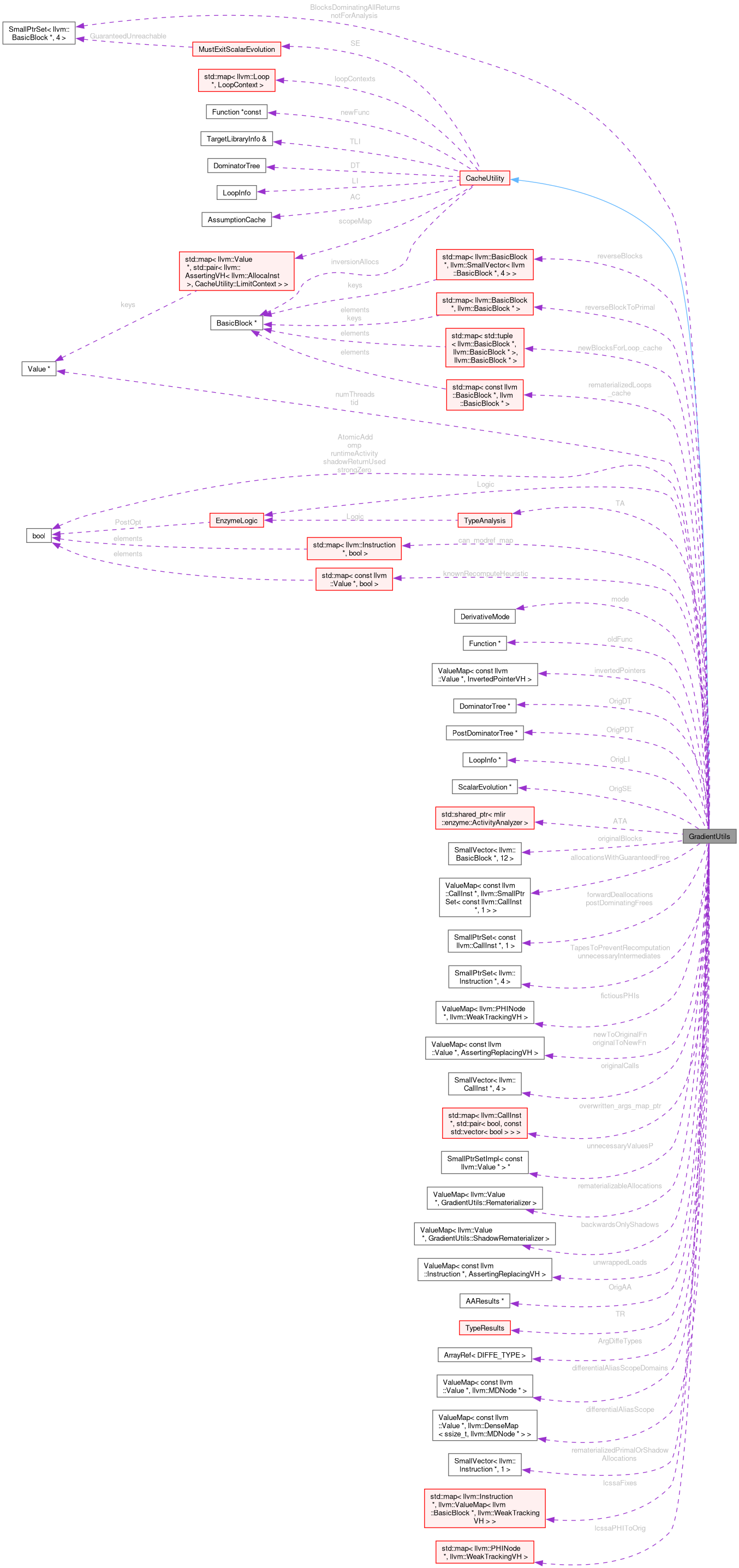

Classes | |

| struct | LoadLikeCall |

| struct | Rematerializer |

| struct | ShadowRematerializer |

Public Member Functions | |

| llvm::SmallVector< llvm::OperandBundleDef, 2 > | getInvertedBundles (llvm::CallInst *orig, llvm::ArrayRef< ValueType > types, llvm::IRBuilder<> &Builder2, bool lookup, const llvm::ValueToValueMapTy &available=llvm::ValueToValueMapTy()) |

| bool | usedInRooting (const llvm::CallBase *orig, llvm::ArrayRef< ValueType > types, const llvm::Value *val, bool shadow) const |

| llvm::Value * | getNewIfOriginal (llvm::Value *originst) const |

| llvm::Value * | ompThreadId () |

| llvm::Value * | ompNumThreads () |

| llvm::Value * | getOrInsertTotalMultiplicativeProduct (llvm::Value *val, LoopContext &lc) |

| llvm::Value * | getOrInsertConditionalIndex (llvm::Value *val, LoopContext &lc, bool pickTrue) |

| bool | assumeDynamicLoopOfSizeOne (llvm::Loop *L) const override |

| llvm::DebugLoc | getNewFromOriginal (const llvm::DebugLoc L) const |

| llvm::Value * | getNewFromOriginal (const llvm::Value *originst) const |

| llvm::Instruction * | getNewFromOriginal (const llvm::Instruction *newinst) const |

| llvm::BasicBlock * | getNewFromOriginal (const llvm::BasicBlock *newinst) const |

| llvm::Value * | hasUninverted (const llvm::Value *inverted) const |

| llvm::BasicBlock * | getOriginalFromNew (const llvm::BasicBlock *newinst) const |

| llvm::Value * | isOriginal (const llvm::Value *newinst) const |

| llvm::Instruction * | isOriginal (const llvm::Instruction *newinst) const |

| llvm::BasicBlock * | isOriginal (const llvm::BasicBlock *newinst) const |

| void | computeForwardingProperties (llvm::Instruction *V) |

| void | computeGuaranteedFrees () |

| void | replaceAndRemoveUnwrapCacheFor (llvm::Value *A, llvm::Value *B) |

| llvm::BasicBlock * | addReverseBlock (llvm::BasicBlock *currentBlock, llvm::Twine const &name, bool forkCache=true, bool push=true) |

| bool | legalRecompute (const llvm::Value *val, const llvm::ValueToValueMapTy &available, llvm::IRBuilder<> *BuilderM, bool reverse=false, bool legalRecomputeCache=true) const |

| bool | shouldRecompute (const llvm::Value *val, const llvm::ValueToValueMapTy &available, llvm::IRBuilder<> *BuilderM) |

| Given the option to recompute a value or re-use an old one, return true if it is faster to recompute this value from scratch. | |

| void | replaceAWithB (llvm::Value *A, llvm::Value *B, bool storeInCache=false) override |

| Replace this instruction both in LLVM modules and any local data-structures. | |

| void | erase (llvm::Instruction *I) override |

| Erase this instruction both from LLVM modules and any local data-structures. | |

| void | eraseWithPlaceholder (llvm::Instruction *I, llvm::Instruction *orig, const llvm::Twine &suffix="_replacementA", bool erase=true) |

| void | setTape (llvm::Value *newtape) |

| void | dumpPointers () |

| int | getIndex (std::pair< llvm::Instruction *, CacheType > idx, const std::map< std::pair< llvm::Instruction *, CacheType >, int > &mapping, llvm::IRBuilder<> &) |

| int | getIndex (std::pair< llvm::Instruction *, CacheType > idx, std::map< std::pair< llvm::Instruction *, CacheType >, int > &mapping, llvm::IRBuilder<> &) |

| llvm::Value * | cacheForReverse (llvm::IRBuilder<> &BuilderQ, llvm::Value *malloc, int idx, bool replace=true) |

| llvm::ArrayRef< llvm::WeakTrackingVH > | getTapeValues () const |

| unsigned | getWidth () |

| GradientUtils (EnzymeLogic &Logic, llvm::Function *newFunc_, llvm::Function *oldFunc_, llvm::TargetLibraryInfo &TLI_, TypeAnalysis &TA_, TypeResults TR_, llvm::ValueToValueMapTy &invertedPointers_, const llvm::SmallPtrSetImpl< llvm::Value * > &constantvalues_, const llvm::SmallPtrSetImpl< llvm::Value * > &activevals_, DIFFE_TYPE ReturnActivity, bool shadowReturnUsed, llvm::ArrayRef< DIFFE_TYPE > ArgDiffeTypes_, llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > &originalToNewFn_, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool omp) | |

| DIFFE_TYPE | getDiffeType (llvm::Value *v, bool foreignFunction) const |

| DIFFE_TYPE | getReturnDiffeType (llvm::Value *orig, bool *primalReturnUsedP, bool *shadowReturnUsedP, DerivativeMode cmode) const |

| DIFFE_TYPE | getReturnDiffeType (llvm::Value *orig, bool *primalReturnUsedP, bool *shadowReturnUsedP) const |

| llvm::MDNode * | getDerivativeAliasScope (const llvm::Value *origptr, ssize_t newptr) |

| void | setPtrDiffe (llvm::Instruction *orig, llvm::Value *ptr, llvm::Value *newval, llvm::IRBuilder<> &BuilderM, llvm::MaybeAlign align, unsigned start, unsigned size, bool isVolatile, llvm::AtomicOrdering ordering, llvm::SyncScope::ID syncScope, llvm::Value *mask, llvm::ArrayRef< llvm::Metadata * > noAlias, llvm::ArrayRef< llvm::Metadata * > scopes, bool needs_post_cache=false) |

| llvm::BasicBlock * | getReverseOrLatchMerge (llvm::BasicBlock *BB, llvm::BasicBlock *branchingBlock) |

| Given an edge from BB to branchingBlock get the corresponding block to branch to in the reverse pass. | |

| void | forceContexts () |

| void | computeMinCache () |

| bool | isOriginalBlock (const llvm::BasicBlock &BB) const |

| void | eraseFictiousPHIs () |

| void | forceActiveDetection () |

| bool | isConstantValue (llvm::Value *val) const |

| bool | isConstantInstruction (const llvm::Instruction *inst) const |

| bool | getContext (llvm::BasicBlock *BB, LoopContext &lc) |

| void | forceAugmentedReturns () |

| llvm::Value * | unwrapM (llvm::Value *const val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &available, UnwrapMode unwrapMode, llvm::BasicBlock *scope=nullptr, bool permitCache=true) override final |

| In full-unwrap mode, unwrap not only this instruction but also its operands, and so on recursively. | |

| void | ensureLookupCached (llvm::Instruction *inst, bool shouldFree=true, llvm::BasicBlock *scope=nullptr, llvm::MDNode *TBAA=nullptr) |

| llvm::Value * | fixLCSSA (llvm::Instruction *inst, llvm::BasicBlock *forwardBlock, bool legalInBlock=false) |

| llvm::Value * | lookupM (llvm::Value *val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &incoming_availalble=llvm::ValueToValueMapTy(), bool tryLegalRecomputeCheck=true, llvm::BasicBlock *scope=nullptr) override |

| High-level utility to get the value of an instruction at a new location specified by BuilderM. | |

| llvm::Value * | invertPointerM (llvm::Value *val, llvm::IRBuilder<> &BuilderM, bool nullShadow=false) |

| void | branchToCorrespondingTarget (llvm::BasicBlock *ctx, llvm::IRBuilder<> &BuilderM, const std::map< llvm::BasicBlock *, std::vector< std::pair< llvm::BasicBlock *, llvm::BasicBlock * > > > &targetToPreds, const std::map< llvm::BasicBlock *, llvm::PHINode * > *replacePHIs=nullptr) |

| Given a map of edges we could have taken to desired target, compute a value that determines which target should be branched to. | |

| void | getReverseBuilder (llvm::IRBuilder<> &Builder2, bool original=true) |

| void | getForwardBuilder (llvm::IRBuilder<> &Builder2) |

| llvm::Type * | getShadowType (llvm::Type *ty) |

| template<typename Func , typename... Args> | |

| llvm::Value * | applyChainRule (llvm::Type *diffType, llvm::IRBuilder<> &Builder, Func rule, Args... args) |

| Unwraps a vector derivative from its internal representation and applies a function f to each element. | |

| template<typename Func , typename... Args> | |

| void | applyChainRule (llvm::IRBuilder<> &Builder, Func rule, Args... args) |

| Unwraps a vector derivative from its internal representation and applies a function f to each element. | |

| template<typename Func > | |

| llvm::Value * | applyChainRule (llvm::Type *diffType, llvm::ArrayRef< llvm::Constant * > diffs, llvm::IRBuilder<> &Builder, Func rule) |

| Unwraps a collection of constant vector derivatives from their internal representations and applies a function f to each element. | |

| bool | needsCacheWholeAllocation (const llvm::Value *V) const |

Public Member Functions inherited from CacheUtility | |

| virtual | ~CacheUtility () |

| bool | getContext (llvm::BasicBlock *BB, LoopContext &loopContext, bool ReverseLimit) |

| Given a BasicBlock BB in newFunc, set loopContext to the relevant contained loop and return true. | |

| bool | isInstructionUsedInLoopInduction (llvm::Instruction &I) |

| Return whether the given instruction is needed as part of a loop context. This includes use as the canonical induction variable or its increment. | |

| llvm::AllocaInst * | getDynamicLoopLimit (llvm::Loop *L, bool ReverseLimit=true) |

| void | dumpScope () |

| Print out all currently cached values. | |

| unsigned | getCacheAlignment (unsigned bsize) const |

| SubLimitType | getSubLimits (bool inForwardPass, llvm::IRBuilder<> *RB, LimitContext ctx, llvm::Value *extraSize=nullptr) |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

| llvm::AllocaInst * | createCacheForScope (LimitContext ctx, llvm::Type *T, llvm::StringRef name, bool shouldFree, bool allocateInternal=true, llvm::Value *extraSize=nullptr) |

| Create a cache of Type T at the given LimitContext. | |

| virtual llvm::CallInst * | freeCache (llvm::BasicBlock *forwardPreheader, const SubLimitType &antimap, int i, llvm::AllocaInst *alloc, llvm::Type *myType, llvm::ConstantInt *byteSizeOfType, llvm::Value *storeInto, llvm::MDNode *InvariantMD) |

| If an allocation is requested to be freed, this method (which subclasses may override) is called to choose how and where to free it. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::IRBuilder<> &BuilderM, llvm::Value *val, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the value val in the cache at the location defined in the given builder. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::Instruction *inst, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the instruction in the cache right after the instruction is executed. | |

| llvm::Value * | getCachePointer (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool storeInInstructionsMap, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize) |

| Given an allocation specified by the LimitContext ctx and cache, compute a pointer that can hold the underlying type being cached. | |

| llvm::Value * | lookupValueFromCache (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool isi1, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize=nullptr, llvm::Value *extraOffset=nullptr) |

| Given an allocation specified by the LimitContext ctx and cache, lookup the underlying cached value. | |

Static Public Member Functions | |

| static GradientUtils * | CreateFromClone (EnzymeLogic &Logic, bool runtimeActivity, bool strongZero, unsigned width, llvm::Function *todiff, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, FnTypeInfo &oldTypeInfo, DIFFE_TYPE retType, llvm::ArrayRef< DIFFE_TYPE > constant_args, bool returnUsed, bool shadowReturnUsed, std::map< AugmentedStruct, int > &returnMapping, bool omp) |

| static llvm::Constant * | GetOrCreateShadowConstant (RequestContext context, EnzymeLogic &Logic, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, llvm::Constant *F, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool AtomicAdd) |

| static llvm::Constant * | GetOrCreateShadowFunction (RequestContext context, EnzymeLogic &Logic, llvm::TargetLibraryInfo &TLI, TypeAnalysis &TA, llvm::Function *F, DerivativeMode mode, bool runtimeActivity, bool strongZero, unsigned width, bool AtomicAdd) |

| static llvm::Type * | getShadowType (llvm::Type *ty, unsigned width) |

| static llvm::Value * | extractMeta (llvm::IRBuilder<> &Builder, llvm::Value *Agg, unsigned off, const llvm::Twine &name="") |

| Helper routine to extract a nested element from a struct/array. | |

| static llvm::Value * | extractMeta (llvm::IRBuilder<> &Builder, llvm::Value *Agg, llvm::ArrayRef< unsigned > off, const llvm::Twine &name="", bool fallback=true) |

| Helper routine to extract a nested element from a struct/array. | |

| static llvm::Type * | extractMeta (llvm::Type *T, llvm::ArrayRef< unsigned > off) |

| Helper routine to get the type of an extraction. | |

| static llvm::Value * | recursiveFAdd (llvm::IRBuilder<> &B, llvm::Value *lhs, llvm::Value *rhs, llvm::ArrayRef< unsigned > lhs_off={}, llvm::ArrayRef< unsigned > rhs_off={}, llvm::Value *prev=nullptr, bool vectorLayer=false) |

Public Attributes | |

| EnzymeLogic & | Logic |

| bool | AtomicAdd |

| DerivativeMode | mode |

| llvm::Function * | oldFunc |

| llvm::ValueMap< const llvm::Value *, InvertedPointerVH > | invertedPointers |

| llvm::DominatorTree * | OrigDT |

| llvm::PostDominatorTree * | OrigPDT |

| llvm::LoopInfo * | OrigLI |

| llvm::ScalarEvolution * | OrigSE |

| llvm::SmallPtrSet< llvm::BasicBlock *, 4 > | BlocksDominatingAllReturns |

| (Original) Blocks which dominate all returns | |

| llvm::SmallPtrSet< llvm::BasicBlock *, 4 > | notForAnalysis |

| std::shared_ptr< ActivityAnalyzer > | ATA |

| llvm::SmallVector< llvm::BasicBlock *, 12 > | originalBlocks |

| llvm::ValueMap< const llvm::CallInst *, llvm::SmallPtrSet< const llvm::CallInst *, 1 > > | allocationsWithGuaranteedFree |

| Map from allocations which are known to always be freed before the reverse pass to the list of frees that must apply to each allocation. | |

| llvm::SmallPtrSet< const llvm::CallInst *, 1 > | postDominatingFrees |

| Frees which can always be eliminated as they post-dominate an allocation (which will itself be freed). | |

| llvm::SmallPtrSet< const llvm::CallInst *, 1 > | forwardDeallocations |

| Deallocations that should be kept in the forward pass because they deallocate memory which isn't necessary for the reverse pass. | |

| std::map< llvm::BasicBlock *, llvm::SmallVector< llvm::BasicBlock *, 4 > > | reverseBlocks |

| Map of primal block to corresponding block(s) in reverse. | |

| std::map< llvm::BasicBlock *, llvm::BasicBlock * > | reverseBlockToPrimal |

| Map of block in reverse to corresponding primal block. | |

| llvm::SmallPtrSet< llvm::Instruction *, 4 > | TapesToPreventRecomputation |

| A set of tape extractions that must be cached rather than recomputed. | |

| llvm::ValueMap< llvm::PHINode *, llvm::WeakTrackingVH > | fictiousPHIs |

| llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > | originalToNewFn |

| llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > | newToOriginalFn |

| llvm::SmallVector< llvm::CallInst *, 4 > | originalCalls |

| llvm::SmallPtrSet< llvm::Instruction *, 4 > | unnecessaryIntermediates |

| const std::map< llvm::Instruction *, bool > * | can_modref_map |

| const std::map< llvm::CallInst *, std::pair< bool, const std::vector< bool > > > * | overwritten_args_map_ptr |

| const llvm::SmallPtrSetImpl< const llvm::Value * > * | unnecessaryValuesP |

| llvm::Value * | tid |

| llvm::Value * | numThreads |

| llvm::ValueMap< llvm::Value *, Rematerializer > | rematerializableAllocations |

| llvm::ValueMap< llvm::Value *, ShadowRematerializer > | backwardsOnlyShadows |

| Only loaded from and stored to (not captured), mapped to the stores (and memset). | |

| std::map< const llvm::Value *, bool > | knownRecomputeHeuristic |

| llvm::ValueMap< const llvm::Instruction *, AssertingReplacingVH > | unwrappedLoads |

| llvm::AAResults * | OrigAA |

| TypeAnalysis & | TA |

| TypeResults | TR |

| bool | omp |

| bool | runtimeActivity |

| bool | strongZero |

| bool | shadowReturnUsed |

| llvm::ArrayRef< DIFFE_TYPE > | ArgDiffeTypes |

| llvm::ValueMap< const llvm::Value *, llvm::MDNode * > | differentialAliasScopeDomains |

| llvm::ValueMap< const llvm::Value *, llvm::DenseMap< ssize_t, llvm::MDNode * > > | differentialAliasScope |

| std::map< std::tuple< llvm::BasicBlock *, llvm::BasicBlock * >, llvm::BasicBlock * > | newBlocksForLoop_cache |

| This cache stores blocks we may insert as part of getReverseOrLatchMerge to handle inverse iv iteration. | |

| std::map< const llvm::BasicBlock *, llvm::BasicBlock * > | rematerializedLoops_cache |

| This cache stores a rematerialized forward pass in the loop specified. | |

| llvm::SmallVector< llvm::Instruction *, 1 > | rematerializedPrimalOrShadowAllocations |

| std::map< llvm::Instruction *, llvm::ValueMap< llvm::BasicBlock *, llvm::WeakTrackingVH > > | lcssaFixes |

| std::map< llvm::PHINode *, llvm::WeakTrackingVH > | lcssaPHIToOrig |

Public Attributes inherited from CacheUtility | |

| llvm::Function *const | newFunc |

| The function whose instructions we are caching. | |

| llvm::TargetLibraryInfo & | TLI |

| Various analysis results of newFunc. | |

| llvm::DominatorTree | DT |

| llvm::BasicBlock * | inversionAllocs |

Additional Inherited Members | |

Public Types inherited from CacheUtility | |

| typedef llvm::SmallVector< std::pair< llvm::Value *, llvm::SmallVector< std::pair< LoopContext, llvm::Value * >, 4 > >, 0 > | SubLimitType |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

Protected Member Functions inherited from CacheUtility | |

| CacheUtility (llvm::TargetLibraryInfo &TLI, llvm::Function *newFunc) | |

| llvm::Value * | loadFromCachePointer (llvm::Type *T, llvm::IRBuilder<> &BuilderM, llvm::Value *cptr, llvm::Value *cache) |

| Perform the final load from the cache, applying requisite invariant group and alignment. | |

Protected Attributes inherited from CacheUtility | |

| llvm::LoopInfo | LI |

| llvm::AssumptionCache | AC |

| MustExitScalarEvolution | SE |

| std::map< llvm::Loop *, LoopContext > | loopContexts |

| Map of Loop to requisite loop information needed for AD (forward/reverse induction/etc) | |

| std::map< llvm::Value *, std::pair< llvm::AssertingVH< llvm::AllocaInst >, LimitContext > > | scopeMap |

| A map of values being cached to their underlying allocation/limit context. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::Instruction >, 4 > > | scopeInstructions |

| A map of allocations to a vector of instructions used to create the allocation. Keeping track of these values is useful for deallocation. | |

| std::map< llvm::AllocaInst *, std::set< llvm::AssertingVH< llvm::CallInst > > > | scopeFrees |

| A map of allocations to a set of instructions which free memory as part of the cache. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::CallInst >, 4 > > | scopeAllocs |

| A map of allocations to a set of instructions which allocate memory as part of the cache. | |

| llvm::SmallPtrSet< llvm::LoadInst *, 10 > | CacheLookups |

Detailed Description

Definition at line 125 of file GradientUtils.h.

Constructor & Destructor Documentation

◆ GradientUtils()

| GradientUtils::GradientUtils | ( | EnzymeLogic & | Logic, |

| llvm::Function * | newFunc_, | ||

| llvm::Function * | oldFunc_, | ||

| llvm::TargetLibraryInfo & | TLI_, | ||

| TypeAnalysis & | TA_, | ||

| TypeResults | TR_, | ||

| llvm::ValueToValueMapTy & | invertedPointers_, | ||

| const llvm::SmallPtrSetImpl< llvm::Value * > & | constantvalues_, | ||

| const llvm::SmallPtrSetImpl< llvm::Value * > & | activevals_, | ||

| DIFFE_TYPE | ReturnActivity, | ||

| bool | shadowReturnUsed, | ||

| llvm::ArrayRef< DIFFE_TYPE > | ArgDiffeTypes_, | ||

| llvm::ValueMap< const llvm::Value *, AssertingReplacingVH > & | originalToNewFn_, | ||

| DerivativeMode | mode, | ||

| bool | runtimeActivity, | ||

| bool | strongZero, | ||

| unsigned | width, | ||

| bool | omp ) |

Definition at line 156 of file GradientUtils.cpp.

References mlir::enzyme::MGradientUtils::getShadowType(), and mlir::enzyme::MDiffeGradientUtils::initializationBlock.

Member Function Documentation

◆ addReverseBlock()

| BasicBlock * GradientUtils::addReverseBlock | ( | llvm::BasicBlock * | currentBlock, |

| llvm::Twine const & | name, | ||

| bool | forkCache = true, | ||

| bool | push = true ) |

Definition at line 9421 of file GradientUtils.cpp.

Referenced by EnzymeGradientUtilsAddReverseBlock(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), and AdjointGenerator::visitCommonStore().

◆ applyChainRule() [1/3]

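template<typename Func , typename... Args>

| void GradientUtils::applyChainRule | ( | llvm::IRBuilder<> & | Builder, |

| Func | rule, | ||

| Args... | args ) |

| inline |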

Unwraps a vector derivative from its internal representation and applies a function f to each element.

Return values of f are collected and wrapped.

Definition at line 603 of file GradientUtils.h.

◆ applyChainRule() [2/3]

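template<typename Func >

| llvm::Value * GradientUtils::applyChainRule | ( | llvm::Type * | diffType, |

| llvm::ArrayRef< llvm::Constant * > | diffs, | ||

| llvm::IRBuilder<> & | Builder, | ||

| Func | rule ) |

| inline |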

Unwraps a collection of constant vector derivatives from their internal representations and applies a function f to each element.

Definition at line 626 of file GradientUtils.h.

◆ applyChainRule() [3/3]

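template<typename Func , typename... Args>

| llvm::Value * GradientUtils::applyChainRule | ( | llvm::Type * | diffType, |

| llvm::IRBuilder<> & | Builder, | ||

| Func | rule, | ||

| Args... | args ) |

| inline |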

Unwraps a vector derivative from its internal representation and applies a function f to each element.

Return values of f are collected and wrapped.

Definition at line 571 of file GradientUtils.h.
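The "unwrap, apply, collect" pattern described for `applyChainRule()` can be sketched without LLVM. The following is a toy model only: `ToyBatch`, `toyApplyChainRule`, and `toyProductRule` are hypothetical names, and plain `double` lanes stand in for the `llvm::Value *` aggregates the real class operates on.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Toy stand-in for a batched (vector-mode) derivative: one entry per lane.
// Assumption for illustration: lanes are plain doubles, not llvm::Value*.
using ToyBatch = std::vector<double>;

// Apply `rule` lane by lane to the batched arguments and collect the
// per-lane results into a new batch of the same width.
template <typename Func, typename... Args>
ToyBatch toyApplyChainRule(std::size_t width, Func rule, const Args &...args) {
  ToyBatch result;
  result.reserve(width);
  for (std::size_t lane = 0; lane < width; ++lane) {
    // "Unwrap": pick this lane's element out of every batched argument.
    result.push_back(rule(args[lane]...));
  }
  return result;
}

// Example rule: d(x*y) = x*dy + y*dx, with fixed primal values x = 3, y = 4.
inline ToyBatch toyProductRule(const ToyBatch &dx, const ToyBatch &dy) {
  const double x = 3.0, y = 4.0;
  return toyApplyChainRule(
      dx.size(), [=](double dxi, double dyi) { return x * dyi + y * dxi; },
      dx, dy);
}
```

Calling `toyProductRule` with two lanes, each seeding one input, produces one directional derivative per lane. The rule itself never needs to know the batch width.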

◆ assumeDynamicLoopOfSizeOne()

| bool GradientUtils::assumeDynamicLoopOfSizeOne | ( | llvm::Loop * | L | ) | const |

| override virtual |

Implements CacheUtility.

Definition at line 573 of file GradientUtils.cpp.

◆ branchToCorrespondingTarget()

| void GradientUtils::branchToCorrespondingTarget | ( | llvm::BasicBlock * | ctx, |

| llvm::IRBuilder<> & | BuilderM, | ||

| const std::map< llvm::BasicBlock *, std::vector< std::pair< llvm::BasicBlock *, llvm::BasicBlock * > > > & | targetToPreds, | ||

| const std::map< llvm::BasicBlock *, llvm::PHINode * > * | replacePHIs = nullptr ) |

Given a map of edges we could have taken to desired target, compute a value that determines which target should be branched to.

Definition at line 7778 of file GradientUtils.cpp.
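As a rough illustration of the selection that `targetToPreds` encodes, the sketch below resolves, at run time, which target corresponds to the edge that was actually taken. This is a toy model under stated assumptions: blocks are represented by strings, `toySelectTarget` and the `Toy*` aliases are hypothetical, and the real method instead emits IR that computes and branches on such a selector.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-ins: a block is named by a string, an edge is (from, to).
using ToyBlock = std::string;
using ToyEdge = std::pair<ToyBlock, ToyBlock>;
using ToyTargetToPreds = std::map<ToyBlock, std::vector<ToyEdge>>;

// Return the target whose edge list contains the edge that was taken,
// or an empty name if no target lists that edge.
inline ToyBlock toySelectTarget(const ToyTargetToPreds &targetToPreds,
                                const ToyEdge &taken) {
  for (const auto &entry : targetToPreds)
    for (const ToyEdge &edge : entry.second)
      if (edge == taken)
        return entry.first;
  return ToyBlock();
}
```

Each target owns the set of edges that lead to it, so knowing the taken edge is enough to pick the branch destination.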

◆ cacheForReverse()

| Value * GradientUtils::cacheForReverse | ( | llvm::IRBuilder<> & | BuilderQ, |

| llvm::Value * | malloc, | ||

| int | idx, | ||

| bool | replace = true ) |

Definition at line 2644 of file GradientUtils.cpp.

Referenced by AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitIntrinsicInst(), AdjointGenerator::visitLoadLike(), and AdjointGenerator::visitOMPCall().

◆ computeForwardingProperties()

| void GradientUtils::computeForwardingProperties | ( | llvm::Instruction * | V | ) |

Definition at line 9096 of file GradientUtils.cpp.

◆ computeGuaranteedFrees()

| void GradientUtils::computeGuaranteedFrees | ( | ) |

Definition at line 9633 of file GradientUtils.cpp.

◆ computeMinCache()

| void GradientUtils::computeMinCache | ( | ) |

Definition at line 8336 of file GradientUtils.cpp.

◆ CreateFromClone()

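| GradientUtils * GradientUtils::CreateFromClone | ( | EnzymeLogic & | Logic, |

| bool | runtimeActivity, | ||

| bool | strongZero, | ||

| unsigned | width, | ||

| llvm::Function * | todiff, | ||

| llvm::TargetLibraryInfo & | TLI, | ||

| TypeAnalysis & | TA, | ||

| FnTypeInfo & | oldTypeInfo, | ||

| DIFFE_TYPE | retType, | ||

| llvm::ArrayRef< DIFFE_TYPE > | constant_args, | ||

| bool | returnUsed, | ||

| bool | shadowReturnUsed, | ||

| std::map< AugmentedStruct, int > & | returnMapping, | ||

| bool | omp ) |

| static |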

Definition at line 4391 of file GradientUtils.cpp.

◆ dumpPointers()

| void GradientUtils::dumpPointers | ( | ) |

Definition at line 9576 of file GradientUtils.cpp.

◆ ensureLookupCached()

| void GradientUtils::ensureLookupCached | ( | llvm::Instruction * | inst, |

| bool | shouldFree = true, | ||

| llvm::BasicBlock * | scope = nullptr, | ||

| llvm::MDNode * | TBAA = nullptr ) |

Definition at line 2466 of file GradientUtils.cpp.

◆ erase()

| void GradientUtils::erase | ( | llvm::Instruction * | I | ) |

| override virtual |

Erase this instruction both from LLVM modules and any local data-structures.

Reimplemented from CacheUtility.

Definition at line 9494 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createBinaryOperatorDual(), EnzymeGradientUtilsErase(), AdjointGenerator::forwardModeInvertedPointerFallback(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitLoadLike(), and AdjointGenerator::visitOMPCall().

◆ eraseFictiousPHIs()

| void GradientUtils::eraseFictiousPHIs | ( | ) |

Definition at line 8645 of file GradientUtils.cpp.

◆ eraseWithPlaceholder()

| void GradientUtils::eraseWithPlaceholder | ( | llvm::Instruction * | I, |

| llvm::Instruction * | orig, | ||

| const llvm::Twine & | suffix = "_replacementA", | ||

| bool | erase = true ) |

Definition at line 9537 of file GradientUtils.cpp.

Referenced by EnzymeGradientUtilsEraseWithPlaceholder(), and AdjointGenerator::eraseIfUnused().

◆ extractMeta() [1/3]

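| llvm::Value * GradientUtils::extractMeta | ( | llvm::IRBuilder<> & | Builder, |

| llvm::Value * | Agg, | ||

| llvm::ArrayRef< unsigned > | off, | ||

| const llvm::Twine & | name = "", | ||

| bool | fallback = true ) |

| static |

Helper routine to extract a nested element from a struct/array.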

◆ extractMeta() [2/3]

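| llvm::Value * GradientUtils::extractMeta | ( | llvm::IRBuilder<> & | Builder, |

| llvm::Value * | Agg, | ||

| unsigned | off, | ||

| const llvm::Twine & | name = "" ) |

| static |

Helper routine to extract a nested element from a struct/array.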

Referenced by EnzymeFixupBatchedJuliaCallingConvention(), AdjointGenerator::handleKnownCallDerivatives(), moveSRetToFromRoots(), simplifyExtractions(), simplifyLoad(), SplitPHIs(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitInsertElementInst(), and AdjointGenerator::visitShuffleVectorInst().

◆ extractMeta() [3/3]

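| llvm::Type * GradientUtils::extractMeta | ( | llvm::Type * | T, |

| llvm::ArrayRef< unsigned > | off ) |

| static |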

Helper routine to get the type of an extraction.
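To make "extract a nested element from a struct/array" concrete, here is a minimal sketch of walking an index path through a nested aggregate. It is not the LLVM-based implementation: `ToyAgg`, `toyLeaf`, `toyStruct`, and `toyExtract` are hypothetical names, and the `nullptr` result for an out-of-range path is an assumption of this sketch rather than documented behaviour of `extractMeta()`.

```cpp
#include <cassert>
#include <utility>
#include <vector>

// Toy aggregate: either a leaf value (children empty) or a list of elements.
struct ToyAgg {
  double leaf;
  std::vector<ToyAgg> children;
};

// Convenience builders for the toy aggregate.
inline ToyAgg toyLeaf(double v) { return ToyAgg{v, {}}; }
inline ToyAgg toyStruct(std::vector<ToyAgg> elems) {
  return ToyAgg{0.0, std::move(elems)};
}

// Follow the index path `off` one level at a time. Returns the nested
// element, or nullptr if an index falls outside the aggregate.
inline const ToyAgg *toyExtract(const ToyAgg &agg,
                                const std::vector<unsigned> &off) {
  const ToyAgg *cur = &agg;
  for (unsigned idx : off) {
    if (idx >= cur->children.size())
      return nullptr;
    cur = &cur->children[idx];
  }
  return cur;
}
```

An empty path returns the aggregate itself, while a path such as `{1, 0}` selects element 0 of element 1, mirroring how the `off` parameter of the `llvm::ArrayRef< unsigned >` overloads names a nested position.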

◆ fixLCSSA()

| Value * GradientUtils::fixLCSSA | ( | llvm::Instruction * | inst, |

| llvm::BasicBlock * | forwardBlock, | ||

| bool | legalInBlock = false ) |

Definition at line 2489 of file GradientUtils.cpp.

◆ forceActiveDetection()

| void GradientUtils::forceActiveDetection | ( | ) |

Definition at line 8683 of file GradientUtils.cpp.

◆ forceAugmentedReturns()

| void GradientUtils::forceAugmentedReturns | ( | ) |

Definition at line 8762 of file GradientUtils.cpp.

◆ forceContexts()

| void GradientUtils::forceContexts | ( | ) |

Definition at line 3921 of file GradientUtils.cpp.

◆ getContext()

| bool GradientUtils::getContext | ( | llvm::BasicBlock * | BB, |

| LoopContext & | lc ) |

Definition at line 8757 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createSelectInstAdjoint(), EmitWarning(), and AdjointGenerator::visitBinaryOperator().

◆ getDerivativeAliasScope()

| MDNode * GradientUtils::getDerivativeAliasScope | ( | const llvm::Value * | origptr, |

| ssize_t | newptr ) |

Definition at line 4361 of file GradientUtils.cpp.

Referenced by AdjointGenerator::visitCommonStore(), and AdjointGenerator::visitLoadLike().

◆ getDiffeType()

| DIFFE_TYPE GradientUtils::getDiffeType | ( | llvm::Value * | v, |

| bool | foreignFunction ) const |

Definition at line 4537 of file GradientUtils.cpp.

Referenced by EnzymeGradientUtilsGetDiffeType(), AdjointGenerator::recursivelyHandleSubfunction(), and AdjointGenerator::visitOMPCall().

◆ getForwardBuilder()

| void GradientUtils::getForwardBuilder | ( | llvm::IRBuilder<> & | Builder2 | ) |

Definition at line 4956 of file GradientUtils.cpp.

◆ getIndex() [1/2]

| int GradientUtils::getIndex | ( | std::pair< llvm::Instruction *, CacheType > | idx, |

| const std::map< std::pair< llvm::Instruction *, CacheType >, int > & | mapping, | ||

| llvm::IRBuilder<> & | ) |

◆ getIndex() [2/2]

| int GradientUtils::getIndex | ( | std::pair< llvm::Instruction *, CacheType > | idx, |

| std::map< std::pair< llvm::Instruction *, CacheType >, int > & | mapping, | ||

| llvm::IRBuilder<> & | ) |

◆ getInvertedBundles()

| SmallVector< OperandBundleDef, 2 > GradientUtils::getInvertedBundles | ( | llvm::CallInst * | orig, |

| llvm::ArrayRef< ValueType > | types, | ||

| llvm::IRBuilder<> & | Builder2, | ||

| bool | lookup, | ||

| const llvm::ValueToValueMapTy & | available = llvm::ValueToValueMapTy() ) |

Definition at line 296 of file GradientUtils.cpp.

Referenced by EnzymeGradientUtilsCallWithInvertedBundles(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), and AdjointGenerator::visitMemSetCommon().

◆ getNewFromOriginal() [1/4]

| llvm::BasicBlock * GradientUtils::getNewFromOriginal | ( | const llvm::BasicBlock * | newinst | ) | const |

◆ getNewFromOriginal() [2/4]

| llvm::DebugLoc GradientUtils::getNewFromOriginal | ( | const llvm::DebugLoc | L | ) | const |

Referenced by AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), AdjointGenerator::createSelectInstAdjoint(), EnzymeGradientUtilsNewFromOriginal(), EnzymeGradientUtilsSetDebugLocFromOriginal(), AdjointGenerator::eraseIfUnused(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitBinaryOperator(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitCastInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitInstruction(), AdjointGenerator::visitIntrinsicInst(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitMemSetCommon(), AdjointGenerator::visitMemTransferCommon(), AdjointGenerator::visitMemTransferInst(), and AdjointGenerator::visitOMPCall().

◆ getNewFromOriginal() [3/4]

| llvm::Instruction * GradientUtils::getNewFromOriginal | ( | const llvm::Instruction * | newinst | ) | const |

◆ getNewFromOriginal() [4/4]

| llvm::Value * GradientUtils::getNewFromOriginal | ( | const llvm::Value * | originst | ) | const |

◆ getNewIfOriginal()

| Value * GradientUtils::getNewIfOriginal | ( | llvm::Value * | originst | ) | const |

Definition at line 345 of file GradientUtils.cpp.

◆ GetOrCreateShadowConstant()

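| llvm::Constant * GradientUtils::GetOrCreateShadowConstant | ( | RequestContext | context, |

| EnzymeLogic & | Logic, | ||

| llvm::TargetLibraryInfo & | TLI, | ||

| TypeAnalysis & | TA, | ||

| llvm::Constant * | F, | ||

| DerivativeMode | mode, | ||

| bool | runtimeActivity, | ||

| bool | strongZero, | ||

| unsigned | width, | ||

| bool | AtomicAdd ) |

| static |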

Definition at line 4575 of file GradientUtils.cpp.

◆ GetOrCreateShadowFunction()

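| llvm::Constant * GradientUtils::GetOrCreateShadowFunction | ( | RequestContext | context, |

| EnzymeLogic & | Logic, | ||

| llvm::TargetLibraryInfo & | TLI, | ||

| TypeAnalysis & | TA, | ||

| llvm::Function * | F, | ||

| DerivativeMode | mode, | ||

| bool | runtimeActivity, | ||

| bool | strongZero, | ||

| unsigned | width, | ||

| bool | AtomicAdd ) |

| static |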

Todo: allow tape propagation.

Definition at line 4697 of file GradientUtils.cpp.

◆ getOriginalFromNew()

| BasicBlock * GradientUtils::getOriginalFromNew | ( | const llvm::BasicBlock * | newinst | ) | const |

Definition at line 664 of file GradientUtils.cpp.

Referenced by AdjointGenerator::visitBinaryOperator().
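The `getNewFromOriginal()`, `getOriginalFromNew()`, and `isOriginal()` lookups pair with the `originalToNewFn` and `newToOriginalFn` attributes, which suggests a two-way map between the original function and its clone. The sketch below models that idea with strings as values. `ToyCloneMap` and its `record` method are hypothetical, and returning `nullptr` on a miss is an assumption of this sketch (how the real forward lookup treats a missing entry is not stated here).

```cpp
#include <cassert>
#include <map>
#include <string>

// Toy two-way mapping between values of an original function and its clone.
struct ToyCloneMap {
  std::map<std::string, std::string> originalToNew;
  std::map<std::string, std::string> newToOriginal;

  // Register a clone so that both lookup directions stay in sync.
  void record(const std::string &orig, const std::string &clone) {
    originalToNew[orig] = clone;
    newToOriginal[clone] = orig;
  }

  // Original -> clone. Returns nullptr for unknown values (sketch assumption).
  const std::string *getNewFromOriginal(const std::string &orig) const {
    auto it = originalToNew.find(orig);
    return it == originalToNew.end() ? nullptr : &it->second;
  }

  // Clone -> original. Returns nullptr when the value has no original
  // counterpart, for example a value created only for the reverse pass.
  const std::string *isOriginal(const std::string &newValue) const {
    auto it = newToOriginal.find(newValue);
    return it == newToOriginal.end() ? nullptr : &it->second;
  }
};
```

Keeping both directions updated in a single `record` call avoids the two maps drifting apart when values are replaced or erased.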

◆ getOrInsertConditionalIndex()

| Value * GradientUtils::getOrInsertConditionalIndex | ( | llvm::Value * | val, |

| LoopContext & | lc, | ||

| bool | pickTrue ) |

Definition at line 501 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createSelectInstAdjoint().

◆ getOrInsertTotalMultiplicativeProduct()

| Value * GradientUtils::getOrInsertTotalMultiplicativeProduct | ( | llvm::Value * | val, |

| LoopContext & | lc ) |

Definition at line 420 of file GradientUtils.cpp.

Referenced by AdjointGenerator::visitBinaryOperator().

◆ getReturnDiffeType() [1/2]

| DIFFE_TYPE GradientUtils::getReturnDiffeType | ( | llvm::Value * | orig, |

| bool * | primalReturnUsedP, | ||

| bool * | shadowReturnUsedP ) const |

Definition at line 4480 of file GradientUtils.cpp.

◆ getReturnDiffeType() [2/2]

| DIFFE_TYPE GradientUtils::getReturnDiffeType | ( | llvm::Value * | orig, |

| bool * | primalReturnUsedP, | ||

| bool * | shadowReturnUsedP, | ||

| DerivativeMode | cmode ) const |

Definition at line 4486 of file GradientUtils.cpp.

Referenced by EnzymeGradientUtilsGetReturnDiffeType(), AdjointGenerator::handleKnownCallDerivatives(), and AdjointGenerator::visitCallInst().

◆ getReverseBuilder()

| void GradientUtils::getReverseBuilder | ( | llvm::IRBuilder<> & | Builder2, |

| bool | original = true ) |

Definition at line 4933 of file GradientUtils.cpp.

◆ getReverseOrLatchMerge()

| BasicBlock * GradientUtils::getReverseOrLatchMerge | ( | llvm::BasicBlock * | BB, |

| llvm::BasicBlock * | branchingBlock ) |

Given an edge from BB to branchingBlock get the corresponding block to branch to in the reverse pass.

Definition at line 3776 of file GradientUtils.cpp.

◆ getShadowType() [1/2]

| llvm::Type * GradientUtils::getShadowType | ( | llvm::Type * | ty | ) |

◆ getShadowType() [2/2]

static

Referenced by AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), AdjointGenerator::createSelectInstAdjoint(), DiffeGradientUtils::diffe(), EnzymeGetShadowType(), EnzymeGradientUtilsGetShadowType(), DiffeGradientUtils::getDifferential(), getFunctionTypeForClone(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitCallInst(), InstructionBatcher::visitCallInst(), AdjointGenerator::visitCastInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitExtractValueInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitInsertValueInst(), AdjointGenerator::visitInstruction(), AdjointGenerator::visitLoadLike(), and AdjointGenerator::visitShuffleVectorInst().

◆ getTapeValues()

inline

Definition at line 363 of file GradientUtils.h.

◆ getWidth()

inline

Definition at line 381 of file GradientUtils.h.

Referenced by EnzymeGradientUtilsGetWidth(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitOMPCall(), and AdjointGenerator::visitShuffleVectorInst().

◆ hasUninverted()

| Value * GradientUtils::hasUninverted | ( | const llvm::Value * | inverted | ) | const |

Definition at line 656 of file GradientUtils.cpp.

◆ invertPointerM()

| Value * GradientUtils::invertPointerM | ( | llvm::Value * | val, | llvm::IRBuilder<> & | BuilderM, | bool | nullShadow = false | ) |

Definition at line 5338 of file GradientUtils.cpp.

Referenced by DiffeGradientUtils::diffe(), EnzymeGradientUtilsInvertPointer(), AdjointGenerator::forwardModeInvertedPointerFallback(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitMemSetCommon(), AdjointGenerator::visitMemTransferCommon(), and AdjointGenerator::visitOMPCall().

◆ isConstantInstruction()

| bool GradientUtils::isConstantInstruction | ( | const llvm::Instruction * | inst | ) | const |

Definition at line 8752 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), AdjointGenerator::createSelectInstAdjoint(), EnzymeGradientUtilsIsConstantInstruction(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitBinaryOperator(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitCastInst(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitExtractValueInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitInstruction(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitSelectInst(), and AdjointGenerator::visitShuffleVectorInst().

◆ isConstantValue()

| bool GradientUtils::isConstantValue | ( | llvm::Value * | val | ) | const |

Functions must be reported as false (not constant) so that a function can be replaced with its augmentation; the final result falls back to the analysis.

Definition at line 8702 of file GradientUtils.cpp.

Referenced by DifferentialUseAnalysis::__attribute__(), AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), AdjointGenerator::createSelectInstAdjoint(), DiffeGradientUtils::diffe(), EnzymeGradientUtilsIsConstantValue(), AdjointGenerator::forwardModeInvertedPointerFallback(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), DifferentialUseAnalysis::is_value_needed_in_reverse(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitBinaryOperator(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitCastInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitExtractValueInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitInsertValueInst(), AdjointGenerator::visitInstruction(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitMemSetCommon(), AdjointGenerator::visitMemTransferCommon(), and AdjointGenerator::visitShuffleVectorInst().

◆ isOriginal() [1/3]

| llvm::BasicBlock * GradientUtils::isOriginal | ( | const llvm::BasicBlock * | newinst | ) | const |

◆ isOriginal() [2/3]

| llvm::Instruction * GradientUtils::isOriginal | ( | const llvm::Instruction * | newinst | ) | const |

◆ isOriginal() [3/3]

| llvm::Value * GradientUtils::isOriginal | ( | const llvm::Value * | newinst | ) | const |

Referenced by AdjointGenerator::recursivelyHandleSubfunction().

◆ isOriginalBlock()

| bool GradientUtils::isOriginalBlock | ( | const llvm::BasicBlock & | BB | ) | const |

Definition at line 8637 of file GradientUtils.cpp.

◆ legalRecompute()

| bool GradientUtils::legalRecompute | ( | const llvm::Value * | val, | const llvm::ValueToValueMapTy & | available, | llvm::IRBuilder<> * | BuilderM, | bool | reverse = false, | bool | legalRecomputeCache = true | ) | const |

Definition at line 3928 of file GradientUtils.cpp.

Referenced by AdjointGenerator::handleKnownCallDerivatives(), and AdjointGenerator::visitCallInst().

◆ lookupM()

override virtual

High-level utility to get the value of an instruction at a new location specified by BuilderM.

Unlike unwrap, this function can never fail, falling back to creating a cache if necessary. This function is prepopulated with a set of values that are already known to be available and may contain optimizations for getting the value in more efficient ways (e.g. unwrapping when legal, looking up equivalent values, etc.). This high-level utility should be implemented on top of the low-level caching infrastructure provided in this class.

Implements CacheUtility.

Definition at line 6601 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createSelectInstAdjoint(), EnzymeGradientUtilsLookup(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::lookup(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCallInst(), and AdjointGenerator::visitMemSetCommon().
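The fallback order described for lookupM() (already-available values first, then unwrapping when legal, then a cache) can be illustrated with a small standalone model. This is only a sketch in plain C++ and does not use LLVM or Enzyme types: `ToyLookup`, `tryUnwrap`, `recomputable`, and `forwardValues` are hypothetical names introduced for illustration, and values are modelled as named integers rather than `llvm::Value *`.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <string>

// Hypothetical toy model of "lookup never fails": not Enzyme's implementation.
struct ToyLookup {
  // Values already known to be available at the new location.
  std::map<std::string, int> available;
  // Values that may legally be recomputed (stand-in for a successful unwrap).
  std::map<std::string, std::function<int()>> recomputable;
  // Values produced by the forward pass (the source for any cache we create).
  std::map<std::string, int> forwardValues;
  // Caches created on demand by lookup().
  std::map<std::string, int> cache;

  // Low-level step that is allowed to fail.
  bool tryUnwrap(const std::string &name, int &out) const {
    auto it = recomputable.find(name);
    if (it == recomputable.end())
      return false;
    out = it->second();
    return true;
  }

  // High-level step: available -> unwrap -> create (or reuse) a cache entry.
  int lookup(const std::string &name) {
    auto avail = available.find(name);
    if (avail != available.end())
      return avail->second;
    int value = 0;
    if (tryUnwrap(name, value))
      return value;
    auto fwd = forwardValues.find(name);
    assert(fwd != forwardValues.end() && "toy model: value must exist in the forward pass");
    return cache.emplace(name, fwd->second).first->second;
  }
};
```

In this model only the third path touches `cache`, which mirrors the idea that caching is the last resort rather than the default.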

◆ needsCacheWholeAllocation()

| bool GradientUtils::needsCacheWholeAllocation | ( | const llvm::Value * | V | ) | const |

Definition at line 9912 of file GradientUtils.cpp.

Referenced by DifferentialUseAnalysis::is_value_needed_in_reverse().

◆ ompNumThreads()

| Value * GradientUtils::ompNumThreads | ( | ) |

Definition at line 390 of file GradientUtils.cpp.

◆ ompThreadId()

| Value * GradientUtils::ompThreadId | ( | ) |

Definition at line 361 of file GradientUtils.cpp.

◆ recursiveFAdd()

static

Definition at line 5259 of file GradientUtils.cpp.

◆ replaceAndRemoveUnwrapCacheFor()

| void GradientUtils::replaceAndRemoveUnwrapCacheFor | ( | llvm::Value * | A, | llvm::Value * | B | ) |

Definition at line 10035 of file GradientUtils.cpp.

Referenced by AdjointGenerator::recursivelyHandleSubfunction().

◆ replaceAWithB()

override virtual

Replace this instruction both in LLVM modules and any local data-structures.

Reimplemented from CacheUtility.

Definition at line 9466 of file GradientUtils.cpp.

Referenced by AdjointGenerator::createBinaryOperatorDual(), EnzymeGradientUtilsReplaceAWithB(), AdjointGenerator::forwardModeInvertedPointerFallback(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), and AdjointGenerator::visitCallInst().

◆ setPtrDiffe()

| void GradientUtils::setPtrDiffe | ( | llvm::Instruction * | orig, | llvm::Value * | ptr, | llvm::Value * | newval, | llvm::IRBuilder<> & | BuilderM, | llvm::MaybeAlign | align, | unsigned | start, | unsigned | size, | bool | isVolatile, | llvm::AtomicOrdering | ordering, | llvm::SyncScope::ID | syncScope, | llvm::Value * | mask, | llvm::ArrayRef< llvm::Metadata * > | noAlias, | llvm::ArrayRef< llvm::Metadata * > | scopes, | bool | needs_post_cache = false | ) |

Definition at line 4968 of file GradientUtils.cpp.

Referenced by AdjointGenerator::visitCommonStore().

◆ setTape()

| void GradientUtils::setTape | ( | llvm::Value * | newtape | ) |

Definition at line 9568 of file GradientUtils.cpp.

◆ shouldRecompute()

| bool GradientUtils::shouldRecompute | ( | const llvm::Value * | val, | const llvm::ValueToValueMapTy & | available, | llvm::IRBuilder<> * | BuilderM | ) |

Given the option to recompute a value or re-use an old one, return true if it is faster to recompute this value from scratch.

Definition at line 4218 of file GradientUtils.cpp.

◆ unwrapM()

final override virtual

If performing a full unwrap, unwrap not just this instruction but also its operands, and so on.

Implements CacheUtility.

Definition at line 697 of file GradientUtils.cpp.

Referenced by DiffeGradientUtils::freeCache(), and AdjointGenerator::visitOMPCall().
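The difference between a shallow and a full unwrap noted above can be sketched with a toy expression tree. This is a hedged illustration in plain C++, not the logic of unwrapM(): `ToyInst` and `ToyUnwrapper` are hypothetical stand-ins, and the sketch assumes every instruction can be copied, whereas a real unwrap may fail.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <vector>

// Hypothetical stand-in for an instruction: an opcode plus operand pointers.
struct ToyInst {
  std::string op;
  std::vector<const ToyInst *> operands;
};

struct ToyUnwrapper {
  // Owns every copy made by unwrap().
  std::vector<std::unique_ptr<ToyInst>> created;

  // Copy `inst`. With full == true the operands are copied recursively;
  // otherwise the copy keeps pointing at the original operands.
  const ToyInst *unwrap(const ToyInst *inst, bool full) {
    assert(inst != nullptr);
    std::unique_ptr<ToyInst> copy(new ToyInst{inst->op, {}});
    for (const ToyInst *operand : inst->operands)
      copy->operands.push_back(full ? unwrap(operand, true) : operand);
    created.push_back(std::move(copy));
    return created.back().get();
  }
};
```

For an `fadd` of two loads, a shallow unwrap in this model creates one new node, while a full unwrap creates three.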

◆ usedInRooting()

| bool GradientUtils::usedInRooting | ( | const llvm::CallBase * | orig, | llvm::ArrayRef< ValueType > | types, | const llvm::Value * | val, | bool | shadow | ) | const |

Definition at line 256 of file GradientUtils.cpp.

References ForwardMode, ForwardModeSplit, and getShadowType().

Member Data Documentation

◆ allocationsWithGuaranteedFree

| llvm::ValueMap<const llvm::CallInst *, llvm::SmallPtrSet<const llvm::CallInst *, 1> > GradientUtils::allocationsWithGuaranteedFree |

Maps each allocation that is known to always be freed before the reverse pass to the list of frees that must apply to that allocation.

Definition at line 148 of file GradientUtils.h.

◆ ArgDiffeTypes

| llvm::ArrayRef<DIFFE_TYPE> GradientUtils::ArgDiffeTypes |

Definition at line 385 of file GradientUtils.h.

◆ ATA

| std::shared_ptr<ActivityAnalyzer> GradientUtils::ATA |

Definition at line 141 of file GradientUtils.h.

◆ AtomicAdd

| bool GradientUtils::AtomicAdd |

Definition at line 128 of file GradientUtils.h.

Referenced by EnzymeGradientUtilsGetAtomicAdd(), and AdjointGenerator::recursivelyHandleSubfunction().

◆ backwardsOnlyShadows

| llvm::ValueMap<llvm::Value *, ShadowRematerializer> GradientUtils::backwardsOnlyShadows |

Shadows that are only loaded from and stored to (not captured), mapped to their stores (and memsets).

The boolean denotes whether the primal also initializes the shadow, for use as a structure which carries data.

Definition at line 297 of file GradientUtils.h.

Referenced by AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitMemSetCommon(), and AdjointGenerator::visitMemTransferCommon().

◆ BlocksDominatingAllReturns

| llvm::SmallPtrSet<llvm::BasicBlock *, 4> GradientUtils::BlocksDominatingAllReturns |

(Original) Blocks which dominate all returns

Definition at line 138 of file GradientUtils.h.

◆ can_modref_map

| const std::map<llvm::Instruction *, bool>* GradientUtils::can_modref_map |

Definition at line 176 of file GradientUtils.h.

Referenced by AdjointGenerator::visitLoadLike().

◆ differentialAliasScope

| llvm::ValueMap<const llvm::Value *, llvm::DenseMap<ssize_t, llvm::MDNode *> > GradientUtils::differentialAliasScope |

Definition at line 423 of file GradientUtils.h.

◆ differentialAliasScopeDomains

| llvm::ValueMap<const llvm::Value *, llvm::MDNode *> GradientUtils::differentialAliasScopeDomains |

Definition at line 421 of file GradientUtils.h.

◆ fictiousPHIs

| llvm::ValueMap<llvm::PHINode *, llvm::WeakTrackingVH> GradientUtils::fictiousPHIs |

Definition at line 169 of file GradientUtils.h.

Referenced by AdjointGenerator::recursivelyHandleSubfunction().

◆ forwardDeallocations

| llvm::SmallPtrSet<const llvm::CallInst *, 1> GradientUtils::forwardDeallocations |

Deallocations that should be kept in the forward pass because they deallocate memory which isn't necessary for the reverse pass.

Definition at line 157 of file GradientUtils.h.

◆ invertedPointers

| llvm::ValueMap<const llvm::Value *, InvertedPointerVH> GradientUtils::invertedPointers |

Definition at line 131 of file GradientUtils.h.

Referenced by AdjointGenerator::createBinaryOperatorDual(), DiffeGradientUtils::CreateFromClone(), EnzymeGradientUtilsInvertedPointersToString(), AdjointGenerator::forwardModeInvertedPointerFallback(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitCallInst(), and AdjointGenerator::visitLoadLike().

◆ knownRecomputeHeuristic

| std::map<const llvm::Value *, bool> GradientUtils::knownRecomputeHeuristic |

Definition at line 327 of file GradientUtils.h.

Referenced by AdjointGenerator::eraseIfUnused(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitIntrinsicInst(), and AdjointGenerator::visitLoadLike().

◆ lcssaFixes

| std::map<llvm::Instruction *, llvm::ValueMap<llvm::BasicBlock *, llvm::WeakTrackingVH> > GradientUtils::lcssaFixes |

Definition at line 502 of file GradientUtils.h.

◆ lcssaPHIToOrig

| std::map<llvm::PHINode *, llvm::WeakTrackingVH> GradientUtils::lcssaPHIToOrig |

Definition at line 503 of file GradientUtils.h.

◆ Logic

| EnzymeLogic& GradientUtils::Logic |

Definition at line 127 of file GradientUtils.h.

Referenced by DiffeGradientUtils::CreateFromClone(), EnzymeGradientUtilsGetExternalContext(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCallInst(), and AdjointGenerator::visitOMPCall().

◆ mode

| DerivativeMode GradientUtils::mode |

Definition at line 129 of file GradientUtils.h.

Referenced by DifferentialUseAnalysis::__attribute__(), DiffeGradientUtils::CreateFromClone(), DiffeGradientUtils::diffe(), EnzymeGradientUtilsGetMode(), EnzymeGradientUtilsGetUncacheableArgs(), DiffeGradientUtils::getDifferential(), DifferentialUseAnalysis::is_value_needed_in_reverse(), and DiffeGradientUtils::setDiffe().

◆ newBlocksForLoop_cache

| std::map<std::tuple<llvm::BasicBlock *, llvm::BasicBlock *>, llvm::BasicBlock *> GradientUtils::newBlocksForLoop_cache |

This cache stores blocks we may insert as part of getReverseOrLatchMerge() to handle inverse induction-variable (IV) iteration.

Definition at line 445 of file GradientUtils.h.

◆ newToOriginalFn

| llvm::ValueMap<const llvm::Value *, AssertingReplacingVH> GradientUtils::newToOriginalFn |

Definition at line 171 of file GradientUtils.h.

Referenced by EnzymeReplaceOriginalToNew(), and AdjointGenerator::recursivelyHandleSubfunction().

◆ notForAnalysis

| llvm::SmallPtrSet<llvm::BasicBlock *, 4> GradientUtils::notForAnalysis |

Definition at line 140 of file GradientUtils.h.

Referenced by DifferentialUseAnalysis::__attribute__().

◆ numThreads

| llvm::Value* GradientUtils::numThreads |

Definition at line 195 of file GradientUtils.h.

◆ oldFunc

| llvm::Function* GradientUtils::oldFunc |

Definition at line 130 of file GradientUtils.h.

Referenced by AdjointGenerator::AdjointGenerator(), calculateUnusedStores(), calculateUnusedValues(), AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), DiffeGradientUtils::CreateFromClone(), DiffeGradientUtils::diffe(), EnzymeGradientUtilsGetUncacheableArgs(), DiffeGradientUtils::getDifferential(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), DifferentialUseAnalysis::is_value_needed_in_reverse(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractValueInst(), and AdjointGenerator::visitOMPCall().

◆ omp

| bool GradientUtils::omp |

Definition at line 371 of file GradientUtils.h.

Referenced by DiffeGradientUtils::CreateFromClone().

◆ OrigAA

| llvm::AAResults* GradientUtils::OrigAA |

Definition at line 368 of file GradientUtils.h.

◆ OrigDT

| llvm::DominatorTree* GradientUtils::OrigDT |

Definition at line 132 of file GradientUtils.h.

◆ originalBlocks

| llvm::SmallVector<llvm::BasicBlock *, 12> GradientUtils::originalBlocks |

Definition at line 142 of file GradientUtils.h.

◆ originalCalls

| llvm::SmallVector<llvm::CallInst *, 4> GradientUtils::originalCalls |

Definition at line 172 of file GradientUtils.h.

◆ originalToNewFn

| llvm::ValueMap<const llvm::Value *, AssertingReplacingVH> GradientUtils::originalToNewFn |

Definition at line 170 of file GradientUtils.h.

Referenced by EnzymeReplaceOriginalToNew(), and AdjointGenerator::recursivelyHandleSubfunction().

◆ OrigLI

| llvm::LoopInfo* GradientUtils::OrigLI |

Definition at line 134 of file GradientUtils.h.

Referenced by AdjointGenerator::createSelectInstAdjoint(), and AdjointGenerator::visitBinaryOperator().

◆ OrigPDT

| llvm::PostDominatorTree* GradientUtils::OrigPDT |

Definition at line 133 of file GradientUtils.h.

◆ OrigSE

| llvm::ScalarEvolution* GradientUtils::OrigSE |

Definition at line 135 of file GradientUtils.h.

◆ overwritten_args_map_ptr

| const std::map<llvm::CallInst *, std::pair<bool, const std::vector<bool> > >* GradientUtils::overwritten_args_map_ptr |

Definition at line 178 of file GradientUtils.h.

Referenced by EnzymeGradientUtilsGetUncacheableArgs().

◆ postDominatingFrees

| llvm::SmallPtrSet<const llvm::CallInst *, 1> GradientUtils::postDominatingFrees |

Frees which can always be eliminated, as they post-dominate an allocation (which will itself be freed).

Definition at line 152 of file GradientUtils.h.

◆ rematerializableAllocations

| llvm::ValueMap<llvm::Value *, Rematerializer> GradientUtils::rematerializableAllocations |

Definition at line 292 of file GradientUtils.h.

Referenced by AdjointGenerator::handleKnownCallDerivatives(), DifferentialUseAnalysis::is_value_needed_in_reverse(), AdjointGenerator::visitMemSetCommon(), and AdjointGenerator::visitStoreInst().

◆ rematerializedLoops_cache

| std::map<const llvm::BasicBlock *, llvm::BasicBlock *> GradientUtils::rematerializedLoops_cache |

This cache stores a rematerialized forward pass in the loop specified.

The key is the loop header.

Definition at line 450 of file GradientUtils.h.

◆ rematerializedPrimalOrShadowAllocations

| llvm::SmallVector<llvm::Instruction *, 1> GradientUtils::rematerializedPrimalOrShadowAllocations |

Definition at line 469 of file GradientUtils.h.

Referenced by AdjointGenerator::handleKnownCallDerivatives().

◆ reverseBlocks

| std::map<llvm::BasicBlock *, llvm::SmallVector<llvm::BasicBlock *, 4> > GradientUtils::reverseBlocks |

Map of primal block to corresponding block(s) in reverse.

Definition at line 161 of file GradientUtils.h.

Referenced by EnzymeGradientUtilsSetReverseBlock(), DiffeGradientUtils::freeCache(), AdjointGenerator::handleKnownCallDerivatives(), and AdjointGenerator::handleMPI().

◆ reverseBlockToPrimal

| std::map<llvm::BasicBlock *, llvm::BasicBlock *> GradientUtils::reverseBlockToPrimal |

Map of block in reverse to corresponding primal block.

Definition at line 163 of file GradientUtils.h.

Referenced by EnzymeGradientUtilsSetReverseBlock(), AdjointGenerator::handleKnownCallDerivatives(), and AdjointGenerator::handleMPI().
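reverseBlocks and reverseBlockToPrimal describe the same relation in opposite directions: one primal block may have several reverse blocks, while each reverse block has one primal block. The sketch below shows one way to keep two such maps consistent. It uses plain C++ only; `ToyBlock`, `ToyReverseMaps`, and `addReverse` are hypothetical names, and this is not how Enzyme itself populates the maps.

```cpp
#include <cassert>
#include <map>
#include <string>
#include <vector>

// Hypothetical stand-in for llvm::BasicBlock; only identity and name matter.
struct ToyBlock {
  std::string name;
};

struct ToyReverseMaps {
  // Primal block -> corresponding block(s) in the reverse pass.
  std::map<ToyBlock *, std::vector<ToyBlock *>> reverseBlocks;
  // Reverse-pass block -> the primal block it corresponds to.
  std::map<ToyBlock *, ToyBlock *> reverseBlockToPrimal;

  // Register `rev` as a reverse block of `primal`, updating both directions.
  void addReverse(ToyBlock *primal, ToyBlock *rev) {
    assert(reverseBlockToPrimal.count(rev) == 0 &&
           "toy model: a reverse block maps to exactly one primal block");
    reverseBlocks[primal].push_back(rev);
    reverseBlockToPrimal[rev] = primal;
  }
};
```

Routing every insertion through a single helper keeps the two maps from drifting apart, which is the main point of the sketch.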

◆ runtimeActivity

| bool GradientUtils::runtimeActivity |

Definition at line 372 of file GradientUtils.h.

Referenced by DiffeGradientUtils::CreateFromClone(), EnzymeGradientUtilsGetRuntimeActivity(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitMemTransferCommon(), and AdjointGenerator::visitOMPCall().

◆ shadowReturnUsed

| bool GradientUtils::shadowReturnUsed |

Definition at line 383 of file GradientUtils.h.

◆ strongZero

| bool GradientUtils::strongZero |

Definition at line 375 of file GradientUtils.h.

Referenced by AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), DiffeGradientUtils::CreateFromClone(), EnzymeGradientUtilsGetStrongZero(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitBinaryOperator(), and AdjointGenerator::visitOMPCall().

◆ TA

| TypeAnalysis& GradientUtils::TA |

Definition at line 369 of file GradientUtils.h.

Referenced by DiffeGradientUtils::CreateFromClone().

◆ TapesToPreventRecomputation

| llvm::SmallPtrSet<llvm::Instruction *, 4> GradientUtils::TapesToPreventRecomputation |

A set of tape extractions for which a cache is enforced rather than attempting to recompute them.

Definition at line 167 of file GradientUtils.h.

Referenced by AdjointGenerator::handleMPI(), and AdjointGenerator::recursivelyHandleSubfunction().

◆ tid

| llvm::Value* GradientUtils::tid |

Definition at line 192 of file GradientUtils.h.

◆ TR

| TypeResults GradientUtils::TR |

Definition at line 370 of file GradientUtils.h.

Referenced by DiffeGradientUtils::CreateFromClone(), EmitNoDerivativeError(), EmitNoTypeError(), EnzymeGradientUtilsAllocAndGetTypeTree(), EnzymeGradientUtilsDumpTypeResults(), EnzymeGradientUtilsTypeAnalyzer(), and DifferentialUseAnalysis::is_value_needed_in_reverse().

◆ unnecessaryIntermediates

| llvm::SmallPtrSet<llvm::Instruction *, 4> GradientUtils::unnecessaryIntermediates |

Definition at line 174 of file GradientUtils.h.

Referenced by AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::recursivelyHandleSubfunction(), AdjointGenerator::visitCallInst(), and AdjointGenerator::visitLoadLike().

◆ unnecessaryValuesP

| const llvm::SmallPtrSetImpl<const llvm::Value *>* GradientUtils::unnecessaryValuesP |

Definition at line 179 of file GradientUtils.h.

◆ unwrappedLoads

| llvm::ValueMap<const llvm::Instruction *, AssertingReplacingVH> GradientUtils::unwrappedLoads |

Definition at line 333 of file GradientUtils.h.

Referenced by AdjointGenerator::visitOMPCall().

The documentation for this class was generated from the following files:

- MLIR/Interfaces/GradientUtils.h

- MLIR/Interfaces/GradientUtils.cpp

Generated on Fri May 8 2026 19:56:26 for Enzyme