#include "CacheUtility.h"

Classes | |

| struct | LimitContext |

Public Types | |

| typedef llvm::SmallVector< std::pair< llvm::Value *, llvm::SmallVector< std::pair< LoopContext, llvm::Value * >, 4 > >, 0 > | SubLimitType |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

Public Member Functions | |

| virtual | ~CacheUtility () |

| bool | getContext (llvm::BasicBlock *BB, LoopContext &loopContext, bool ReverseLimit) |

| Given a BasicBlock BB in newFunc, set loopContext to the relevant contained loop and return true. | |

| bool | isInstructionUsedInLoopInduction (llvm::Instruction &I) |

| Return whether the given instruction is used as a necessary part of a loop context. This includes use as the canonical induction variable or its increment. | |

| llvm::AllocaInst * | getDynamicLoopLimit (llvm::Loop *L, bool ReverseLimit=true) |

| void | dumpScope () |

| Print out all currently cached values. | |

| unsigned | getCacheAlignment (unsigned bsize) const |

| virtual void | erase (llvm::Instruction *I) |

| Erase this instruction both from LLVM modules and any local data-structures. | |

| virtual void | replaceAWithB (llvm::Value *A, llvm::Value *B, bool storeInCache=false) |

| Replace this instruction both in LLVM modules and any local data-structures. | |

| SubLimitType | getSubLimits (bool inForwardPass, llvm::IRBuilder<> *RB, LimitContext ctx, llvm::Value *extraSize=nullptr) |

| Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader. | |

| llvm::AllocaInst * | createCacheForScope (LimitContext ctx, llvm::Type *T, llvm::StringRef name, bool shouldFree, bool allocateInternal=true, llvm::Value *extraSize=nullptr) |

| Create a cache of Type T at the given LimitContext. | |

| virtual llvm::Value * | unwrapM (llvm::Value *const val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &available, UnwrapMode mode, llvm::BasicBlock *scope=nullptr, bool permitCache=true)=0 |

| High-level utility to "unwrap" an instruction at a new location specified by BuilderM. | |

| virtual llvm::Value * | lookupM (llvm::Value *val, llvm::IRBuilder<> &BuilderM, const llvm::ValueToValueMapTy &incoming_availalble=llvm::ValueToValueMapTy(), bool tryLegalityCheck=true, llvm::BasicBlock *scope=nullptr)=0 |

| High-level utility to get the value of an instruction at a new location specified by BuilderM. | |

| virtual bool | assumeDynamicLoopOfSizeOne (llvm::Loop *L) const =0 |

| virtual llvm::CallInst * | freeCache (llvm::BasicBlock *forwardPreheader, const SubLimitType &antimap, int i, llvm::AllocaInst *alloc, llvm::Type *myType, llvm::ConstantInt *byteSizeOfType, llvm::Value *storeInto, llvm::MDNode *InvariantMD) |

| If an allocation is requested to be freed, this function is called so that the subclass can choose how and where to free it. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::IRBuilder<> &BuilderM, llvm::Value *val, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the value val in the cache at the location defined in the given builder. | |

| void | storeInstructionInCache (LimitContext ctx, llvm::Instruction *inst, llvm::AllocaInst *cache, llvm::MDNode *TBAA=nullptr) |

| Given an allocation defined at a particular ctx, store the instruction in the cache right after the instruction is executed. | |

| llvm::Value * | getCachePointer (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool storeInInstructionsMap, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize) |

| Given an allocation specified by the LimitContext ctx and cache, compute a pointer that can hold the underlying type being cached. | |

| llvm::Value * | lookupValueFromCache (llvm::Type *T, bool inForwardPass, llvm::IRBuilder<> &BuilderM, LimitContext ctx, llvm::Value *cache, bool isi1, const llvm::ValueToValueMapTy &available, llvm::Value *extraSize=nullptr, llvm::Value *extraOffset=nullptr) |

| Given an allocation specified by the LimitContext ctx and cache, lookup the underlying cached value. | |

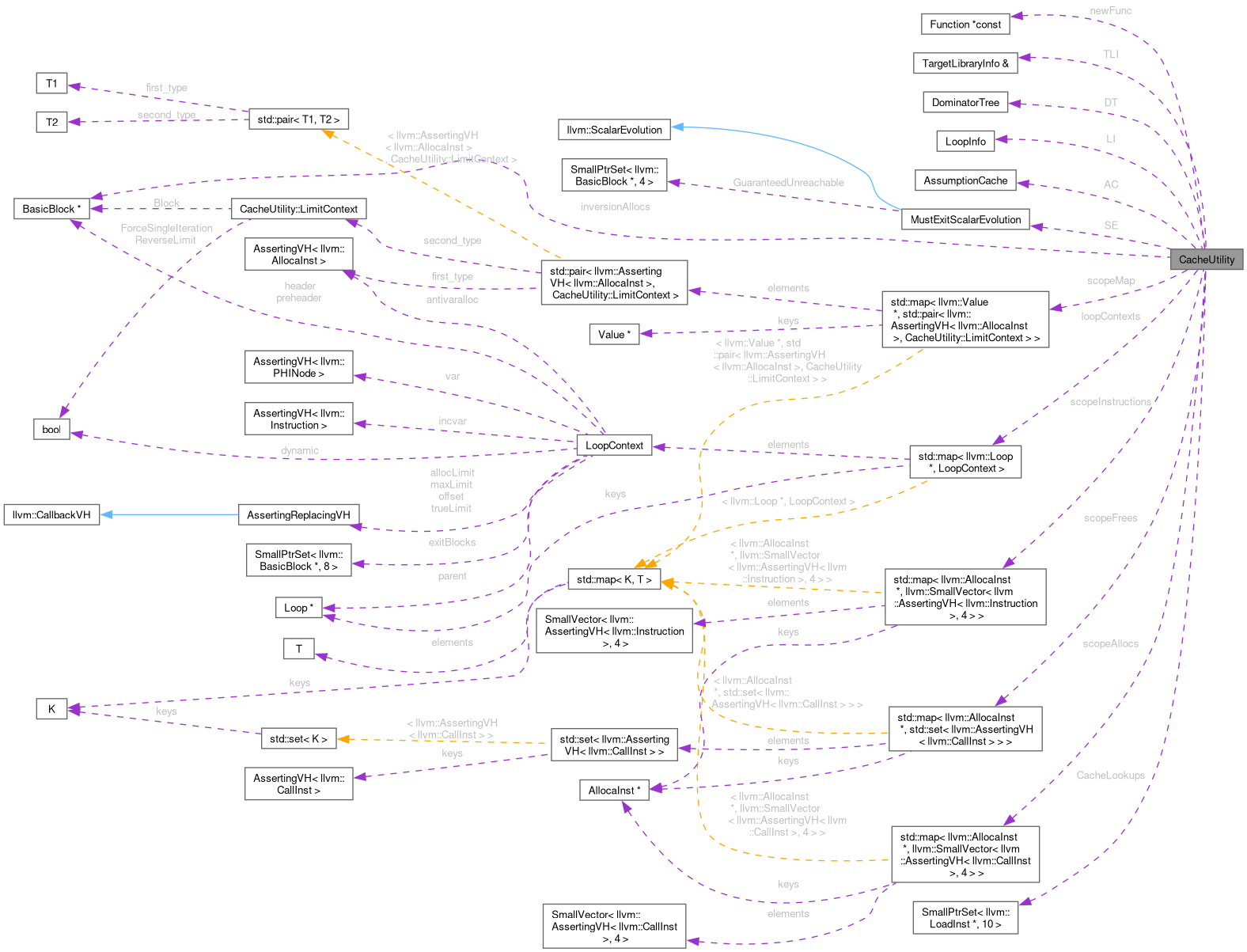

Public Attributes | |

| llvm::Function *const | newFunc |

| The function whose instructions we are caching. | |

| llvm::TargetLibraryInfo & | TLI |

| Various analysis results of newFunc. | |

| llvm::DominatorTree | DT |

| llvm::BasicBlock * | inversionAllocs |

Protected Member Functions | |

| CacheUtility (llvm::TargetLibraryInfo &TLI, llvm::Function *newFunc) | |

| llvm::Value * | loadFromCachePointer (llvm::Type *T, llvm::IRBuilder<> &BuilderM, llvm::Value *cptr, llvm::Value *cache) |

| Perform the final load from the cache, applying requisite invariant group and alignment. | |

Protected Attributes | |

| llvm::LoopInfo | LI |

| llvm::AssumptionCache | AC |

| MustExitScalarEvolution | SE |

| std::map< llvm::Loop *, LoopContext > | loopContexts |

| Map of Loop to requisite loop information needed for AD (forward/reverse induction/etc) | |

| std::map< llvm::Value *, std::pair< llvm::AssertingVH< llvm::AllocaInst >, LimitContext > > | scopeMap |

| A map of values being cached to their underlying allocation/limit context. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::Instruction >, 4 > > | scopeInstructions |

| A map of allocations to a vector of instructions used to create the allocation. Keeping track of these values is useful for deallocation. | |

| std::map< llvm::AllocaInst *, std::set< llvm::AssertingVH< llvm::CallInst > > > | scopeFrees |

| A map of allocations to a set of instructions which free memory as part of the cache. | |

| std::map< llvm::AllocaInst *, llvm::SmallVector< llvm::AssertingVH< llvm::CallInst >, 4 > > | scopeAllocs |

| A map of allocations to a set of instructions which allocate memory as part of the cache. | |

| llvm::SmallPtrSet< llvm::LoadInst *, 10 > | CacheLookups |

Detailed Description

Definition at line 144 of file CacheUtility.h.

Member Typedef Documentation

◆ SubLimitType

| llvm::SmallVector<std::pair< llvm::Value *, llvm::SmallVector< std::pair<LoopContext, llvm::Value *>, 4> >, 0> CacheUtility::SubLimitType |

Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader.

Every dynamic loop defines the start of a chunk. SubLimitType is a vector of chunk objects. More specifically, it is a vector of { # iters in a Chunk (sublimit), Chunk }. Each chunk object is a vector of the loops contained within the chunk. For every loop, this holds a pair of the LoopContext and the limit of that loop. Both the vector of Chunks and the vector of Loops within a Chunk go from innermost loop to outermost loop.
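The shape of this type can be illustrated with a small model that uses plain integers in place of `llvm::Value *`. This is only a sketch of one reading of the description above: `ToyLoop`, `ToyChunk`, `ToySubLimits` and `toySubLimits` are hypothetical names, not part of Enzyme, and the rule "a chunk is closed when the current loop is dynamic or is the outermost loop" is an assumption. In the real class a dynamic loop's limit is not a compile-time constant.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for LoopContext: only the fields this sketch needs.
struct ToyLoop {
  std::string name;
  long limit;    // iteration count (stand-in for the llvm::Value* limit)
  bool dynamic;  // true if the trip count is only known at run time
};

// Same nesting as SubLimitType, with long instead of llvm::Value*.
using ToyChunk = std::vector<std::pair<ToyLoop, long>>;
using ToySubLimits = std::vector<std::pair<long, ToyChunk>>;

// `loops` is ordered innermost to outermost.
// Assumption: a chunk is closed when the current loop is dynamic
// or when it is the outermost loop.
inline ToySubLimits toySubLimits(const std::vector<ToyLoop> &loops) {
  ToySubLimits result;
  ToyChunk chunk;
  long size = 1;  // running product of the limits in the current chunk
  for (std::size_t i = 0; i < loops.size(); ++i) {
    assert(loops[i].limit > 0 && "toy limits must be positive");
    chunk.emplace_back(loops[i], loops[i].limit);
    size *= loops[i].limit;
    if (loops[i].dynamic || i + 1 == loops.size()) {
      result.emplace_back(size, chunk);
      chunk.clear();
      size = 1;
    }
  }
  return result;
}
```

For loops `j` (limit 4), `i` (limit 3, dynamic) and `k` (limit 5), listed innermost first, this model yields two chunks: `{j, i}` with sublimit 12 and `{k}` with sublimit 5.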

Definition at line 264 of file CacheUtility.h.

Constructor & Destructor Documentation

◆ CacheUtility()

|

inline protected |

Definition at line 164 of file CacheUtility.h.

References inversionAllocs, and newFunc.

◆ ~CacheUtility()

|

virtual |

Definition at line 52 of file CacheUtility.cpp.

Member Function Documentation

◆ assumeDynamicLoopOfSizeOne()

|

pure virtual |

Implemented in GradientUtils.

Referenced by getContext().

◆ createCacheForScope()

| AllocaInst * CacheUtility::createCacheForScope | ( | LimitContext | ctx, |

| llvm::Type * | T, | ||

| llvm::StringRef | name, | ||

| bool | shouldFree, | ||

| bool | allocateInternal = true, | ||

| llvm::Value * | extraSize = nullptr ) |

Create a cache of Type T at the given LimitContext.

Caching mechanism: creates a cache of type T in a scope given by ctx (if ctx is in a loop, there will be a corresponding number of slots).

If allocateInternal is set, this will allocate the requisite memory. If extraSize is set, allocations will be a factor of extraSize larger.
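As a rough illustration of the slot count, the sketch below multiplies the loop limits of one chunk and scales the result by `extraSize`. The helper `toySlotCount` is hypothetical and works on plain integers; the real function builds LLVM IR for these sizes rather than computing them on the host.

```cpp
#include <cstdint>
#include <vector>

// Hypothetical helper: number of slots needed for one chunk of loops.
// `limits` holds the iteration count of each loop in the chunk;
// an empty vector models a location outside of any loop (one slot).
inline std::uint64_t toySlotCount(const std::vector<std::uint64_t> &limits,
                                  std::uint64_t extraSize = 1) {
  std::uint64_t slots = 1;
  for (std::uint64_t limit : limits)
    slots *= limit;
  // extraSize scales the allocation by a constant factor.
  return slots * extraSize;
}
```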

Definition at line 789 of file CacheUtility.cpp.

References AttemptFullUnwrapWithLookup, CacheUtility::LimitContext::Block, CreateAllocation(), CreateReAllocation(), EfficientBoolCache, EnzymeZeroCache, freeCache(), getCacheAlignment(), getFast(), getSubLimits(), getUndefinedValueForType(), inversionAllocs, newFunc, PostCacheStore(), scopeAllocs, scopeInstructions, and unwrapM().

Referenced by getDynamicLoopLimit().

◆ dumpScope()

|

inline |

Print out all currently cached values.

Definition at line 201 of file CacheUtility.h.

References scopeMap.

◆ erase()

|

virtual |

Erase this instruction both from LLVM modules and any local data-structures.

Reimplemented in GradientUtils.

Definition at line 55 of file CacheUtility.cpp.

References CustomErrorHandler, EmitFailure(), findInMap(), InternalError, newFunc, scopeAllocs, scopeFrees, scopeInstructions, scopeMap, SE, and str().

◆ freeCache()

|

inline virtual |

If an allocation is requested to be freed, this function is called so that the subclass can choose how and where to free it.

It is not implemented by default and falls back to an error. Subclasses that want to free memory should implement this function.
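The pattern described here, a virtual hook whose default reports an error and which subclasses override, can be sketched in isolation. `ToyCacheBase` and `ToyFreeingCache` are hypothetical classes, the string return value stands in for the emitted `llvm::CallInst *`, and throwing an exception is only this sketch's way of signalling the error.

```cpp
#include <stdexcept>
#include <string>

// Hypothetical base class: the default hook reports an error.
class ToyCacheBase {
public:
  virtual ~ToyCacheBase() = default;

  // Stand-in for freeCache(); returns a description of the emitted free.
  virtual std::string freeCache(const std::string &allocName) {
    throw std::logic_error("freeCache not implemented for " + allocName);
  }
};

// Hypothetical subclass that opts in to freeing cached memory.
class ToyFreeingCache : public ToyCacheBase {
public:
  std::string freeCache(const std::string &allocName) override {
    return "free(" + allocName + ")";
  }
};
```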

Reimplemented in DiffeGradientUtils.

Definition at line 375 of file CacheUtility.h.

Referenced by createCacheForScope().

◆ getCacheAlignment()

|

inline |

Definition at line 210 of file CacheUtility.h.

Referenced by createCacheForScope(), DiffeGradientUtils::freeCache(), getCachePointer(), and loadFromCachePointer().

◆ getCachePointer()

| Value * CacheUtility::getCachePointer | ( | llvm::Type * | T, |

| bool | inForwardPass, | ||

| llvm::IRBuilder<> & | BuilderM, | ||

| LimitContext | ctx, | ||

| llvm::Value * | cache, | ||

| bool | storeInInstructionsMap, | ||

| const llvm::ValueToValueMapTy & | available, | ||

| llvm::Value * | extraSize ) |

Given an allocation specified by the LimitContext ctx and cache, compute a pointer that can hold the underlying type being cached.

This value should be computed at BuilderM. Optionally, instructions needed to generate this pointer can be stored in scopeInstructions.
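One way to picture the pointer computation is as a flattened index into the slots of a chunk. The sketch below assumes the innermost loop varies fastest, which is an assumption of this example rather than something stated on this page, and `toyFlatIndex` is a hypothetical helper operating on plain integers instead of IR values.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// indices[k] is the current iteration of loop k and limits[k] its
// iteration count; both are ordered innermost to outermost.
// Assumption: the innermost loop has stride 1.
inline std::uint64_t toyFlatIndex(const std::vector<std::uint64_t> &indices,
                                  const std::vector<std::uint64_t> &limits) {
  assert(indices.size() == limits.size());
  std::uint64_t offset = 0;
  std::uint64_t stride = 1;  // product of the limits of all inner loops
  for (std::size_t k = 0; k < indices.size(); ++k) {
    assert(indices[k] < limits[k]);
    offset += indices[k] * stride;
    stride *= limits[k];
  }
  return offset;
}
```

With limits `{4, 3}` and indices `{1, 2}`, the offset is `1 + 2 * 4 = 9`; the last valid slot, indices `{3, 2}`, maps to offset 11.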

Definition at line 1481 of file CacheUtility.cpp.

References CacheUtility::LimitContext::Block, CreateAllocation(), EfficientBoolCache, getCacheAlignment(), getSubLimits(), lookupM(), newFunc, and scopeInstructions.

Referenced by lookupValueFromCache().

◆ getContext()

| bool CacheUtility::getContext | ( | llvm::BasicBlock * | BB, |

| LoopContext & | loopContext, | ||

| bool | ReverseLimit ) |

Given a BasicBlock BB in newFunc, set loopContext to the relevant contained loop and return true.

If BB is not in a loop, return false.

Definition at line 496 of file CacheUtility.cpp.

References assumeDynamicLoopOfSizeOne(), CanonicalizeLatches(), MustExitScalarEvolution::computeExitLimit(), EfficientMaxCache(), EmitWarning(), FindCanonicalIV(), findInMap(), getDynamicLoopLimit(), getExitBlocks(), getLatches(), MustExitScalarEvolution::GuaranteedUnreachable, inversionAllocs, LI, loopContexts, newFunc, and SE.

Referenced by getSubLimits().

◆ getDynamicLoopLimit()

| llvm::AllocaInst * CacheUtility::getDynamicLoopLimit | ( | llvm::Loop * | L, |

| bool | ReverseLimit = true ) |

Definition at line 463 of file CacheUtility.cpp.

References createCacheForScope(), loopContexts, newFunc, and storeInstructionInCache().

Referenced by getContext().

◆ getSubLimits()

| CacheUtility::SubLimitType CacheUtility::getSubLimits | ( | bool | inForwardPass, |

| llvm::IRBuilder<> * | RB, | ||

| LimitContext | ctx, | ||

| llvm::Value * | extraSize = nullptr ) |

Given a LimitContext ctx, representing a location inside a loop nest, break each of the loops up into chunks of loops where each chunk's number of iterations can be computed at the chunk preheader.

Every dynamic loop defines the start of a chunk. SubLimitType is a vector of chunk objects. More specifically, it is a vector of { # iters in a Chunk (sublimit), Chunk }. Each chunk object is a vector of the loops contained within the chunk. For every loop, this returns a pair of the LoopContext and the limit of that loop. Both the vector of Chunks and the vector of Loops within a Chunk go from innermost loop to outermost loop.

Definition at line 1109 of file CacheUtility.cpp.

References LoopContext::allocLimit, LoopContext::antivaralloc, AttemptFullUnwrap, AttemptFullUnwrapWithLookup, CacheUtility::LimitContext::Block, LoopContext::dynamic, EmitWarning(), LoopContext::exitBlocks, CacheUtility::LimitContext::ForceSingleIteration, getContext(), LoopContext::header, LoopContext::incvar, LoopContext::maxLimit, newFunc, LoopContext::offset, LoopContext::parent, LoopContext::preheader, CacheUtility::LimitContext::ReverseLimit, LoopContext::trueLimit, unwrapM(), and LoopContext::var.

Referenced by createCacheForScope(), and getCachePointer().

◆ isInstructionUsedInLoopInduction()

|

inline |

Return whether the given instruction is used as a necessary part of a loop context. This includes use as the canonical induction variable or its increment.
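A check of this kind amounts to scanning the recorded loop contexts for the instruction. The sketch below is a simplified model: `ToyInstruction`, `ToyLoopInfo` and `toyUsedInLoopInduction` are hypothetical, the map is keyed by an integer instead of `llvm::Loop *`, and only the induction variable and its increment are compared, although the real implementation may check additional fields.

```cpp
#include <map>

// Hypothetical stand-ins for llvm::Instruction and LoopContext.
struct ToyInstruction {
  int id;
};

struct ToyLoopInfo {
  const ToyInstruction *var;     // canonical induction variable
  const ToyInstruction *incvar;  // increment of the induction variable
};

// Returns true if I is the induction variable or increment of any loop.
inline bool toyUsedInLoopInduction(const std::map<int, ToyLoopInfo> &loopContexts,
                                   const ToyInstruction &I) {
  for (const auto &entry : loopContexts) {
    if (entry.second.var == &I || entry.second.incvar == &I)
      return true;
  }
  return false;
}
```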

Definition at line 187 of file CacheUtility.h.

References loopContexts.

◆ loadFromCachePointer()

|

protected |

Perform the final load from the cache, applying requisite invariant group and alignment.

Definition at line 1586 of file CacheUtility.cpp.

References CacheLookups, getCacheAlignment(), and newFunc.

Referenced by lookupValueFromCache().

◆ lookupM()

|

pure virtual |

High-level utility to get the value of an instruction at a new location specified by BuilderM.

Unlike unwrap, this function can never fail – falling back to creating a cache if necessary. This function is prepopulated with a set of values that are already known to be available and may contain optimizations for getting the value in more efficient ways (e.g. unwrap'ing when legal, looking up equivalent values, etc). This high-level utility should be implemented based off the low-level caching infrastructure provided in this class.
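The contract described here (use an available value if there is one, otherwise try to unwrap, otherwise fall back to a cache) can be modelled without LLVM. Everything in the sketch is hypothetical: `ToyLookup` maps names to integers, `unwrap` returns a null pointer where the real utility would return a null value, and the order in which the strategies are tried is an assumption of this example.

```cpp
#include <map>
#include <string>

struct ToyLookup {
  std::map<std::string, int> available;     // already known at the new location
  std::map<std::string, int> recomputable;  // legal to recompute ("unwrap")
  std::map<std::string, int> forward;       // values seen in the forward pass
  std::map<std::string, int> cache;         // slots created on demand

  // Stand-in for unwrapM: may fail, signalled by nullptr.
  const int *unwrap(const std::string &name) const {
    auto it = recomputable.find(name);
    return it == recomputable.end() ? nullptr : &it->second;
  }

  // Stand-in for lookupM: falls back to creating a cache slot
  // for any value that exists in `forward`.
  int lookup(const std::string &name) {
    auto a = available.find(name);
    if (a != available.end())
      return a->second;
    if (const int *v = unwrap(name))
      return *v;
    auto slot = cache.find(name);
    if (slot == cache.end())
      slot = cache.emplace(name, forward.at(name)).first;
    return slot->second;
  }
};
```

In this model a cache slot is only created for values that are neither available nor recomputable, which mirrors the idea that caching is the last resort.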

Implemented in GradientUtils.

Referenced by getCachePointer().

◆ lookupValueFromCache()

| Value * CacheUtility::lookupValueFromCache | ( | llvm::Type * | T, |

| bool | inForwardPass, | ||

| llvm::IRBuilder<> & | BuilderM, | ||

| LimitContext | ctx, | ||

| llvm::Value * | cache, | ||

| bool | isi1, | ||

| const llvm::ValueToValueMapTy & | available, | ||

| llvm::Value * | extraSize = nullptr, | ||

| llvm::Value * | extraOffset = nullptr ) |

Given an allocation specified by the LimitContext ctx and cache, lookup the underlying cached value.

Definition at line 1614 of file CacheUtility.cpp.

References EfficientBoolCache, getCachePointer(), and loadFromCachePointer().

◆ replaceAWithB()

|

virtual |

Replace this instruction both in LLVM modules and any local data-structures.

Reimplemented in GradientUtils.

Definition at line 91 of file CacheUtility.cpp.

References insert_or_assign2(), scopeInstructions, scopeMap, and storeInstructionInCache().

◆ storeInstructionInCache() [1/2]

| void CacheUtility::storeInstructionInCache | ( | LimitContext | ctx, |

| llvm::Instruction * | inst, | ||

| llvm::AllocaInst * | cache, | ||

| llvm::MDNode * | TBAA = nullptr ) |

Given an allocation defined at a particular ctx, store the instruction in the cache right after the instruction is executed.

Definition at line 1453 of file CacheUtility.cpp.

References CacheUtility::LimitContext::Block, getFast(), getNextNonDebugInstruction(), and storeInstructionInCache().

◆ storeInstructionInCache() [2/2]

| void CacheUtility::storeInstructionInCache | ( | LimitContext | ctx, |

| llvm::IRBuilder<> & | BuilderM, | ||

| llvm::Value * | val, | ||

| llvm::AllocaInst * | cache, | ||

| llvm::MDNode * | TBAA = nullptr ) |

Given an allocation defined at a particular ctx, store the value val in the cache at the location defined in the given builder.

Referenced by getDynamicLoopLimit(), replaceAWithB(), and storeInstructionInCache().

◆ unwrapM()

|

pure virtual |

High-level utility to "unwrap" an instruction at a new location specified by BuilderM.

Depending on the mode, it will unwrap either just this instruction or all of its instruction operands, and optionally look up values when it is not legal to unwrap. If a value cannot be unwrap'd at a given location, this will return null. This high-level utility should be implemented based off the low-level caching infrastructure provided in this class.

Implemented in GradientUtils.

Referenced by createCacheForScope(), and getSubLimits().

Member Data Documentation

◆ AC

|

protected |

Definition at line 155 of file CacheUtility.h.

◆ CacheLookups

|

protected |

Definition at line 419 of file CacheUtility.h.

Referenced by loadFromCachePointer().

◆ DT

| llvm::DominatorTree CacheUtility::DT |

Definition at line 151 of file CacheUtility.h.

◆ inversionAllocs

| llvm::BasicBlock* CacheUtility::inversionAllocs |

Definition at line 161 of file CacheUtility.h.

Referenced by CacheUtility(), createCacheForScope(), EnzymeGradientUtilsAllocationBlock(), getContext(), DiffeGradientUtils::getDifferential(), AdjointGenerator::handleMPI(), AdjointGenerator::MPI_COMM_RANK(), AdjointGenerator::MPI_COMM_SIZE(), AdjointGenerator::MPI_TYPE_SIZE(), and AdjointGenerator::visitOMPCall().

◆ LI

|

protected |

Definition at line 154 of file CacheUtility.h.

Referenced by getContext().

◆ loopContexts

|

protected |

Map of Loop to requisite loop information needed for AD (forward/reverse induction/etc)

Definition at line 177 of file CacheUtility.h.

Referenced by getContext(), getDynamicLoopLimit(), and isInstructionUsedInLoopInduction().

◆ newFunc

| llvm::Function* const CacheUtility::newFunc |

The function whose instructions we are caching.

Definition at line 147 of file CacheUtility.h.

Referenced by CacheUtility(), AdjointGenerator::createBinaryOperatorAdjoint(), AdjointGenerator::createBinaryOperatorDual(), createCacheForScope(), DiffeGradientUtils::CreateFromClone(), AdjointGenerator::createSelectInstAdjoint(), DiffeGradientUtils::diffe(), AdjointGenerator::DifferentiableMemCopyFloats(), erase(), DiffeGradientUtils::freeCache(), getCachePointer(), getContext(), getDynamicLoopLimit(), getSubLimits(), AdjointGenerator::handleAdjointForIntrinsic(), AdjointGenerator::handleKnownCallDerivatives(), AdjointGenerator::handleMPI(), loadFromCachePointer(), AdjointGenerator::recursivelyHandleSubfunction(), DiffeGradientUtils::setDiffe(), AdjointGenerator::visitAtomicRMWInst(), AdjointGenerator::visitCastInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitExtractElementInst(), AdjointGenerator::visitExtractValueInst(), AdjointGenerator::visitInsertElementInst(), AdjointGenerator::visitInsertValueInst(), AdjointGenerator::visitLoadInst(), AdjointGenerator::visitLoadLike(), AdjointGenerator::visitMemSetCommon(), AdjointGenerator::visitMemTransferCommon(), AdjointGenerator::visitOMPCall(), and AdjointGenerator::visitShuffleVectorInst().

◆ scopeAllocs

|

protected |

A map of allocations to a set of instructions which allocate memory as part of the cache.

Definition at line 327 of file CacheUtility.h.

Referenced by createCacheForScope(), and erase().

◆ scopeFrees

|

protected |

A map of allocations to a set of instructions which free memory as part of the cache.

Definition at line 321 of file CacheUtility.h.

Referenced by erase(), and DiffeGradientUtils::freeCache().

◆ scopeInstructions

|

protected |

A map of allocations to a vector of instructions used to create the allocation. Keeping track of these values is useful for deallocation.

This is stored as a vector explicitly to order these instructions in such a way that they can be erased by iterating in reverse order.
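The reason reverse order matters can be shown with a small model in which an instruction may only be erased once nothing live uses it. `ToyInst` and `toyEraseAll` are hypothetical, and the single-operand rule is a simplification of real use-def chains.

```cpp
#include <algorithm>
#include <vector>

// Hypothetical instruction: `operand` is the id of the earlier
// instruction it uses, or -1 if it uses none.
struct ToyInst {
  int id;
  int operand;
};

// Tries to erase the instructions in the given order. Returns false as
// soon as an instruction still has a live user, true if all were erased.
inline bool toyEraseAll(std::vector<ToyInst> live, const std::vector<int> &order) {
  for (int id : order) {
    for (const ToyInst &I : live) {
      if (I.operand == id)
        return false;  // a live instruction still uses `id`
    }
    live.erase(std::remove_if(live.begin(), live.end(),
                              [id](const ToyInst &I) { return I.id == id; }),
               live.end());
  }
  return live.empty();
}
```

For a chain where instruction 2 uses 1 and instruction 3 uses 2, erasing in creation order fails at the first step, while erasing in reverse creation order succeeds.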

Definition at line 316 of file CacheUtility.h.

Referenced by createCacheForScope(), erase(), getCachePointer(), and replaceAWithB().

◆ scopeMap

|

protected |

A map of values being cached to their underlying allocation/limit context.

Definition at line 308 of file CacheUtility.h.

Referenced by dumpScope(), erase(), and replaceAWithB().

◆ SE

|

protected |

Definition at line 156 of file CacheUtility.h.

Referenced by erase(), and getContext().

◆ TLI

| llvm::TargetLibraryInfo& CacheUtility::TLI |

Various analysis results of newFunc.

Definition at line 150 of file CacheUtility.h.

Referenced by DiffeGradientUtils::CreateFromClone(), AdjointGenerator::handleKnownCallDerivatives(), isAllocationCall(), isAllocationFunction(), isDeallocationCall(), isDeallocationFunction(), AdjointGenerator::visitCallInst(), AdjointGenerator::visitCommonStore(), AdjointGenerator::visitMemSetCommon(), and zeroKnownAllocation().

The documentation for this class was generated from the following files:

Generated on Fri May 8 2026 19:56:26 for Enzyme.